ChatGPT needs advertising revenue in 2026 because subscription and API

income cannot cover the cost of serving more than 800 million weekly

free users. Free-to-paid conversion is under 5%, inference costs are

falling slower than usage is rising (roughly 35% efficiency gains per

year against 70%+ query volume growth), and enterprise gross margin

can't stretch to subsidize consumer free traffic. Ads are the only

revenue engine that grows with free-tier engagement.

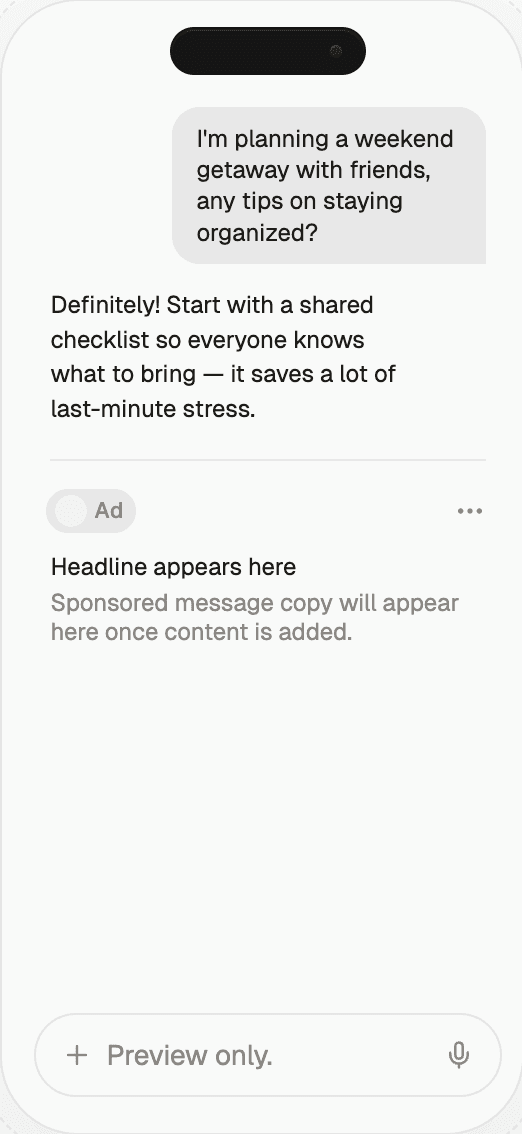

Why ChatGPT Needs Ad Revenue — 2026 | Thrad

The pivot to advertising was not a strategy choice — it was forced by

arithmetic. Free-tier usage grew faster than paid conversion, inference

costs stayed stubborn, and API margins are structurally thin. Ads are

the only additive revenue engine that scales with free traffic.

ChatGPT needs advertising revenue because the cost of serving more than

800 million free users every week now outstrips what subscriptions and

API revenue can cover on their own. It's an arithmetic problem, not a

philosophical one — free-tier compute is real money, conversion to paid

is low single digits, and the only additive revenue engine that scales

with free traffic is ads. This article walks through the structural

math: the compute gap, the conversion ceiling, the API margin squeeze,

and why 2026 was the year arithmetic forced a decision that had been

deferred since 2023.

What is the structural problem?

ChatGPT runs a two-sided economy where one side pays and the other

doesn't. The paid side — Plus at $20/mo, Pro at $200/mo, Team,

Enterprise, API — generates roughly $12B of annualized revenue in

2026. The free side generates the majority of usage, nearly all of the

compute cost, and none of the direct revenue. In 2023 this gap was

manageable because free-tier usage was small relative to paid. In 2026,

with free-tier users above 800 million weekly actives, the gap has

inverted: free usage is the dominant cost driver.

The revenue math has to balance somehow. OpenAI's realistic options

reduce to four:

1. Cut free usage: damages growth, trust, and the consumer moat that justifies the valuation in the first place.

2. Raise paid prices: suppresses conversion more than it raises revenue at the $20 price point, based on public modeling.

3. Monetize free users directly: advertising, the subject of this article.

4. Hope inference costs fall faster than usage grows: the bet most optimists made in 2023-2024. It didn't pay off.

Option three is the arithmetic answer. The rest of this article

explains why.

How big is the free-tier compute bill actually?

Public estimates put OpenAI's 2026 free-tier serving cost in the $3-5B annualized range, larger than any other operating line item. A GPT-4o-class answer costs roughly 1-4 cents in marginal inference, with fully loaded cost (including GPU depreciation, electricity, network, and R&D amortization) typically 2-3× that. Multiply by the low billions of weekly free-tier queries and you get a serving bill equal to roughly a quarter to two-fifths of OpenAI's ~$12B in annualized paid revenue, with no direct revenue to set against it.

The cost structure decomposes roughly like this (directional, based on

public analyst modeling):

| Cost component | Share of total inference cost | Notes |
|---|---|---|
| GPU compute (H100/H200/Blackwell class) | 45-55% | The dominant line; amortized over usage |
| Electricity | 10-15% | Rising with datacenter scale and heat density |
| Datacenter overhead | 10-15% | Cooling, networking, physical plant |
| R&D amortization | 10-20% | Training runs spread over inference revenue |
| Storage and networking | 5-10% | KV cache, model weights, egress |

Inference cost is falling — GPUs get faster, models get more efficient,

quantization and caching improve. But that trend runs against the

other dynamic: usage is rising faster. Per-query cost trends down

roughly 30-40% per year; total queries have been rising 70%+ per year.

Net: total cost is still trending up. That's the dynamic that breaks

the optimistic 2023 thesis that "inference will get cheap faster than

usage grows."

The moment that dynamic became undeniable — inference efficiency

gains of ~35% per year against query volume growth of ~70% per year —

was the moment advertising moved from "someday, maybe" to "2026

roadmap." The crossover isn't philosophical. It's a spreadsheet.
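The crossover can be checked with a few lines of arithmetic. The sketch below is illustrative only: it takes the article's round numbers (about 35% annual efficiency gain, about 70% annual query growth) as inputs, and the function names are invented for this example rather than taken from any published model.

```python
def total_cost_multiplier(efficiency_gain: float, volume_growth: float) -> float:
    """Year-over-year multiplier on total serving cost.

    efficiency_gain: fractional drop in per-query cost per year (0.35 = 35%).
    volume_growth: fractional rise in query volume per year (0.70 = 70%).
    """
    return (1.0 - efficiency_gain) * (1.0 + volume_growth)


def break_even_efficiency(volume_growth: float) -> float:
    """Per-query cost reduction needed to hold total cost flat."""
    return 1.0 - 1.0 / (1.0 + volume_growth)


def project_total_cost(base_cost: float, efficiency_gain: float,
                       volume_growth: float, years: int) -> list[float]:
    """Total cost for year 0..years, compounding the same multiplier."""
    mult = total_cost_multiplier(efficiency_gain, volume_growth)
    return [base_cost * mult ** year for year in range(years + 1)]


if __name__ == "__main__":
    mult = total_cost_multiplier(0.35, 0.70)
    print(f"total cost multiplier per year: {mult:.3f}")
    print(f"efficiency gain needed to stay flat: {break_even_efficiency(0.70):.1%}")
    # Index a hypothetical starting bill to 100 and compound for three years.
    print([round(c, 1) for c in project_total_cost(100.0, 0.35, 0.70, 3)])
```

With these inputs the multiplier comes out a little above 1.1, so the bill grows about 10% a year even as each query gets cheaper. Holding it flat at 70% volume growth would take per-query cost falling roughly 41% a year.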

Why won't subscription revenue close the gap on its own?

The fundamental limit is conversion. Consumer freemium products typically land somewhere between 2% and 8% free-to-paid conversion at scale: Dropbox, LinkedIn, YouTube Premium, Evernote, and Notion are all commonly placed in that band, with Spotify's much higher paid share the notable exception. ChatGPT is in the same range, reportedly 3-5%. That ceiling is less a product problem than a persistent pattern in consumer behavior.

Which means every new free-tier user added to the top of the funnel

brings marginal cost immediately and has roughly a 3-5% chance of ever

converting. The cost-to-conversion ratio cannot be closed by better

marketing. It can only be closed by a second revenue engine that

monetizes the 95%+ who won't upgrade.

Raising the $20/mo price is similarly limited. The $20 anchor is deliberately calibrated to sit alongside Netflix, Spotify Premium, Google One, and Apple One, subscriptions that consumers treat as low-friction purchases. Public elasticity modeling suggests that raising the price to $25/mo would suppress new paid conversions by 15-25%, leaving OpenAI with roughly flat revenue or a slight decline. A move to $30/mo fares worse in the models. The $20 price point is probably close to revenue-maximizing.
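The price-increase arithmetic is easy to reproduce. This is a minimal sketch that looks only at revenue from new paid conversions and ignores existing subscribers entirely; the 15-25% suppression figures are the article's, while the function names are hypothetical.

```python
def relative_revenue(old_price: float, new_price: float, conversion_drop: float) -> float:
    """Revenue from new paid sign-ups at new_price, relative to old_price.

    conversion_drop is the fraction of would-be upgrades lost to the higher price.
    """
    return (new_price / old_price) * (1.0 - conversion_drop)


def break_even_drop(old_price: float, new_price: float) -> float:
    """Largest conversion drop that still leaves revenue unchanged."""
    return 1.0 - old_price / new_price


if __name__ == "__main__":
    for drop in (0.15, 0.20, 0.25):
        ratio = relative_revenue(20.0, 25.0, drop)
        print(f"$25/mo with {drop:.0%} fewer conversions: {ratio:.4f}x revenue")
    print(f"break-even drop at $25/mo: {break_even_drop(20.0, 25.0):.0%}")
```

On these inputs the 15-25% range straddles the 20% break-even, which is why the outcome is roughly flat: about 6% up at the mild end and about 6% down at the severe end.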

Why isn't the API the answer either?

API revenue is growing fast — likely 100%+ YoY in 2026, reaching an

estimated $1.5-2B annualized — but carries a different economic

problem: margin compression. OpenAI competes on API pricing with

Anthropic, Google, open-weights models from Meta/Mistral/DeepSeek, and

a long tail of inference providers that host open weights at 60-90%

below frontier pricing. To stay competitive, gross margin per API

token is compressed — high volume, low unit contribution.

The API margin profile looks something like this:

| API tier | Approx. gross margin | Notes |
|---|---|---|
| Premium (GPT-5, o-series) | 60-70% | Small volume share, high unit contribution |
| Workhorse (GPT-4o, 4o-mini) | 40-50% | Largest volume, margin squeezed by open-weights |
| Commodity (4o-mini, embeddings) | 20-35% | Race to bottom vs open-weights hosts |

Useful revenue, but not enough to cross-subsidize billions of free-tier

consumer queries on its own. And the competitive dynamic means the

spread only compresses from here.

How does each revenue stream scale with free users?

This is the decisive table. Only advertising grows directly with free-tier engagement. Every other revenue line is either indifferent to free usage or tied to it only indirectly.

| Revenue stream | Growth rate (2026) | Gross margin profile | Scales with free users? |
|---|---|---|---|
| Consumer subs (Plus/Pro) | Plateauing, 20-30% YoY | Healthy per user (~70%) | No (capped by conversion ceiling) |
| API | Fast, 100%+ YoY | Thin (40-50% blended) | No (different audience) |
| Enterprise seats | Steady, 50-80% YoY | Highest (~75-85%) | No (separate P&L) |
| Advertising | Fastest, 300%+ YoY from a low base | TBD (likely 70%+ at scale) | Yes (linearly) |
| Licensing deals | Moderate, 40-60% YoY | Mixed | Partial (indirect) |

The last column is the decisive one. The free-tier cost problem needs

a revenue line that grows with free usage. Advertising is the only one

on the list that qualifies.

Nearly every consumer internet company that reached ChatGPT's free-tier scale eventually landed on an ad-supported model, not because the founders wanted ads, but because advertising is the only proven way to monetize billions of people who use a service and never pay. ChatGPT is following the same gravity.

What changed in 2025 and 2026 to force the decision?

Three concrete shifts made the advertising decision inevitable in

2026 rather than 2027 or 2028.

Usage scale crossed an inflection point. Weekly free-tier users

crossed 500 million in mid-2025 and 800 million in early 2026, making

the serving bill impossible to absorb without either ads or material

rate-limit cuts. At 500M WAU the serving cost is uncomfortable; at

800M+ it becomes a dominant line that forces a strategic response.

Frontier-model costs stayed stubborn. GPT-5 and its tier of models

did not produce the step-change reduction in serving cost that some

analysts projected. Efficiency improved roughly 30-40% year over year,

but not fast enough to offset the 70%+ growth in query volume.

Architectural improvements (MoE, caching, speculative decoding)

delivered real but incremental gains.

Investor pressure solidified. Moving toward a public offering or

a higher private valuation required a credible path to positive

consumer-tier unit economics, and advertising is the only lever that

delivers it inside a reasonable timeframe. Discussions around tender

offers and potential IPO structures through 2025-2026 made the

unit-economics conversation urgent in a way it hadn't been when OpenAI

was purely private and funded by strategic capital.

What does the free-tier unit economics math look like?

The easiest way to see why ads became inevitable is per-user contribution math. Consider a hypothetical weekly free-tier user in 2026.

Average weekly queries per free user: estimated 10-30.

Average cost per query (fully loaded): 1.5-4 cents.

Weekly cost per free user: 15-120 cents, midpoint ~50 cents.

Annualized cost per free user: $8-60, midpoint ~$25.

Multiplied by 800M weekly actives, the midpoint implies roughly $20B annualized in serving cost across all free users. That is well above the often-cited $3-5B figure because this math ignores the roughly 4× efficiency OpenAI achieves through caching, routing to smaller models, and batching. Apply that efficiency discount and the result is about $5B, the top of the commonly cited $3-5B range.
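The per-user figures above can be reproduced directly. The sketch below uses only the ranges stated in this section; the 4× efficiency factor is the article's rough estimate, and the helper names are made up for illustration.

```python
WEEKS_PER_YEAR = 52


def annual_cost_per_user(queries_per_week: float, cost_per_query_usd: float) -> float:
    """Annual serving cost for one free user, before any efficiency discount."""
    return queries_per_week * cost_per_query_usd * WEEKS_PER_YEAR


def annual_serving_cost(users: float, cost_per_user_usd: float,
                        efficiency_factor: float = 1.0) -> float:
    """Fleet-wide annual cost; efficiency_factor divides the naive total."""
    return users * cost_per_user_usd / efficiency_factor


if __name__ == "__main__":
    low = annual_cost_per_user(10, 0.015)   # low end of both ranges
    high = annual_cost_per_user(30, 0.04)   # high end of both ranges
    print(f"per-user range: ${low:.2f} to ${high:.2f} per year")

    users = 800e6     # weekly actives, from the article
    midpoint = 25.0   # the article's rounded midpoint, dollars per year
    naive = annual_serving_cost(users, midpoint)
    discounted = annual_serving_cost(users, midpoint, efficiency_factor=4.0)
    print(f"naive: ${naive / 1e9:.0f}B, after 4x efficiency: ${discounted / 1e9:.0f}B")
```

The per-user range works out to $7.80 to $62.40 a year, which the list above rounds to $8-60. The output is most sensitive to the efficiency factor: a factor closer to 6-7× would be needed to reach the $3B end of the range.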

Against that $3-5B annual cost, OpenAI needs an incremental revenue

line of similar magnitude to move consumer-tier economics into the

black. Advertising at even $5-10 per free user annualized would close

the gap. That's comparable to what Google and Meta extract from free

users on less-engaged surfaces, making it plausible but not trivial.
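A quick break-even check puts the $5-10 figure in context. This is a sketch under the article's own assumptions (800M weekly free users, $3-5B annual serving cost); `break_even_arpu` and `ad_revenue` are hypothetical helper names.

```python
def break_even_arpu(annual_cost_usd: float, users: float) -> float:
    """Ad revenue per free user per year needed to cover the serving cost."""
    return annual_cost_usd / users


def ad_revenue(users: float, arpu_usd: float) -> float:
    """Total annual ad revenue at a given per-user figure."""
    return users * arpu_usd


if __name__ == "__main__":
    users = 800e6
    for cost in (3e9, 5e9):
        needed = break_even_arpu(cost, users)
        print(f"cost ${cost / 1e9:.0f}B -> break-even ${needed:.2f} per user per year")
    for arpu in (5.0, 10.0):
        total = ad_revenue(users, arpu)
        print(f"${arpu:.0f} per user -> ${total / 1e9:.0f}B per year")
```

Break-even sits between $3.75 and $6.25 per user per year. At $5 per user the ad line brings in $4B, which covers the bill only if serving cost is at or below that level; at $10 it brings in $8B and clears the whole range.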

Why was "no ads" not a sustainable position?

Sam Altman publicly expressed skepticism about advertising several

times between 2023 and 2024, framing ads as potentially misaligned

with user interest. The position was defensible while OpenAI's free

tier was small relative to paid. It stopped being sustainable when

free became the dominant cost driver.

Two reframes made the policy shift easier. First, commercial-intent

queries are genuinely useful with ads — a user asking "best

noise-canceling headphones under $300" is already in a buying posture,

and a well-targeted sponsored result is arguably closer to the user's

goal than an answer that refuses to acknowledge commerce. Second,

the ads-vs-no-ads binary was never the real choice — the real

choice was ads vs harder rate limits, shrinking free access, or

converting toward a Claude-like enterprise-heavy mix that would

forfeit the consumer brand.

Common misconceptions

"OpenAI is profitable, so ads are just greed." Not accurate at the consolidated product level. Subscription + API margin does not cover training, free-tier serving, and R&D combined as of early 2026. Reported operating losses in the single-to-low-double-digit billions persist.

"Microsoft's investment covers the costs." Microsoft funds compute capacity and equity, not operating expenses. Revenue still has to pay for serving users.

"If they just raised Plus to $30/mo this would be fixed." Modeling doesn't support this. The price elasticity kills more upgrades than the extra $10 generates in most scenarios.

"Ads betray the original mission." The mission-vs-economics tension is real, but the alternative to ads is a smaller, more gated ChatGPT, which arguably conflicts more with the mission of broad access than advertising does.

"Inference will just get cheap enough to make ads unnecessary." Thirty-plus months of empirical data say otherwise. Usage growth outstrips efficiency gains; the crossover point where inference becomes a non-factor is not visible in the current trajectory.

What comes next for the ad business?

Expect ad revenue to grow fastest through 2026 and 2027, compounding

from a sub-$500M base toward single-digit billions by 2028 on analyst

models. Expect the free tier to remain genuinely useful rather than

being gated harder — because ads make a generous free tier sustainable.

Expect API pricing to keep compressing, which reinforces the need for

advertising as the consumer-side engine. And expect sponsored search

and shopping to be the first large formats, with conversational

placements emerging more slowly because of trust-cost concerns.

Two second-order effects are worth anticipating. First, ad revenue will likely fund a more generous free tier, not a more restricted one, which is the opposite of what some critics predicted. Second, an advertising-funded free tier changes OpenAI's strategic posture toward Google: the two companies are now revenue-model peers, competing for commercial-intent queries with similar monetization.

How to act on this as a brand

The structural argument matters for brand planning because it tells

you ads aren't a reversible experiment. They are now a permanent layer

of how generative surfaces make money, which means visibility inside

ChatGPT, Perplexity, and Gemini is a durable channel — not a fad.

Brands that start building measurement and placement capability now

will have a 12-24 month lead when self-serve auctions ship and the

category opens up.

Thrad helps brands measure that visibility and place ad inventory

inside generative surfaces where paid formats exist today. If the

economics aren't going away, the measurement infrastructure to work

with them shouldn't be improvised.
