Measure generative AI advertising ROI by combining a named baseline,

a chosen incrementality method (geo hold-out, ghost ads, or matched

markets), and full-cost accounting that includes prompt, review,

compliance, and tooling. Report lift, decay curve, and cost per

approved asset side by side. Brands that do this get finance to fund

pilot two; brands that report platform ROAS only get audited and

shelved by Q3.

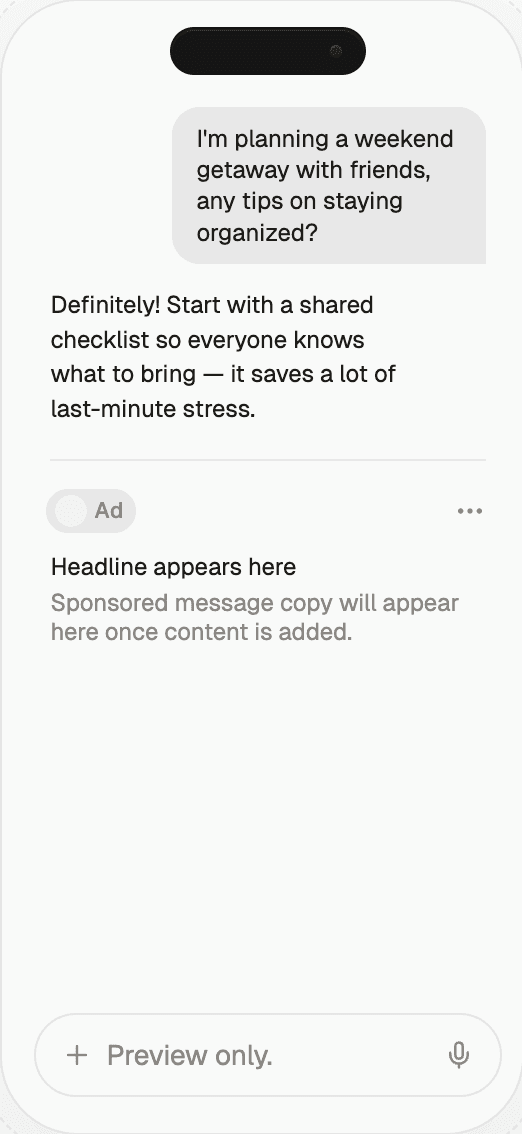


Generative AI Advertising ROI Measurement | Thrad

ROI measurement is what separates real generative AI advertising

programs from press releases. Honest measurement means naming the

baseline, picking an incrementality method, accounting for human-

in-the-loop cost, and reporting the decay curve — not the peak.

Generative AI advertising ROI measurement is where 2026 programs

succeed or fail internally. The technology works; the measurement

often doesn't. This article is a practical framework for measuring

ROI honestly enough to win finance funding for pilot two — covering

baselines, incrementality methods, full cost accounting, decay

analysis, and the specific reporting patterns that survive a CFO's

follow-up questions. The guidance below assumes you already have

generative creative shipping; it is the measurement layer that most

teams realize too late they under-built.

What does generative AI advertising ROI measurement mean?

ROI measurement for generative AI advertising is the practice of

answering three questions, clearly and repeatedly, with evidence a

finance organization will accept. Every honest program answers:

What lift did AI-assisted work deliver vs. non-AI work?

What did it cost, all-in, to produce and run that work?

Was the lift incremental to other channels, or did it cannibalize

them?

Teams that answer all three get funded. Teams that answer only the

first — the flashy lift number — get audited and stalled. The 2026

WARC survey of CFOs at top-200 advertisers found that 68% had

deprioritized or frozen at least one AI marketing pilot in the prior

twelve months specifically because measurement was "platform-only" or

"incrementality-silent." Honest measurement is not a nice-to-have;

it is a funding gate.

How do you name the baseline?

Before the campaign runs, name the baseline out loud. The baseline

is the counterfactual you will compare against — the question "lifted

versus what?" A strong 2026 program writes the baseline into the

campaign brief so it cannot be retrofitted after results are in. Four

common baselines:

Pre-period match. Same campaign, same audiences, previous quarter. Weakest baseline but the most common. Vulnerable to seasonality and market drift.

Parallel non-AI cell. Half the audience gets hand-made creative, half gets AI-assisted. Strongest baseline, highest operational cost: you are running two campaigns in parallel.

Hold-out geos. Some markets get AI; some don't. Clean when you have enough markets (typically 20+ at the US DMA level).

Randomized user hold-out. Within a single market, a control group is excluded from AI-assisted exposure. Requires DSP or platform cooperation and sufficient sample.

Document the baseline in writing, with numbers, before day one. The

2026 IAB Tech Lab attribution guidance explicitly flags

"post-hoc baseline selection" as the leading cause of measurement

disputes in AI advertising programs.
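What "sufficient sample" means for a randomized user hold-out can be estimated before launch and written into the brief alongside the baseline. The sketch below is a minimal illustration, assuming a two-sided two-proportion z-test with equal-sized arms; the function name `users_per_arm`, the 2% baseline conversion rate, and the 15% and 30% relative lifts are hypothetical values chosen for the example, not figures from any source cited here.

```python
import math
from statistics import NormalDist


def users_per_arm(baseline_rate: float, relative_lift: float,
                  alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate users needed in EACH arm (control and exposed) to detect
    a relative lift in conversion rate with a two-sided z-test."""
    p1 = baseline_rate
    p2 = baseline_rate * (1.0 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1.0 - alpha / 2.0)  # significance threshold
    z_power = NormalDist().inv_cdf(power)              # desired power
    variance = p1 * (1.0 - p1) + p2 * (1.0 - p2)
    n = (z_alpha + z_power) ** 2 * variance / (p2 - p1) ** 2
    return math.ceil(n)


# Hypothetical planning inputs: 2% baseline conversion rate.
n_small_lift = users_per_arm(0.02, 0.15)  # detect a 15% relative lift
n_large_lift = users_per_arm(0.02, 0.30)  # detect a 30% relative lift
print(n_small_lift, n_large_lift)
```

Under these assumptions, a mid-teens lift on a low baseline rate needs tens of thousands of users per arm, and halving the detectable lift roughly quadruples the requirement. That is why the detectable effect belongs in the brief before day one.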

How do you pick an incrementality method?

Platform-reported ROAS is a starting point, not the finish line.

Choose one true incrementality method and pre-register it. Different

methods fit different campaign shapes:

| Method | When it fits | Typical horizon | Statistical power |
|---|---|---|---|
| Geo hold-out | National brand, many markets | 8–12 weeks | High |
| Ghost ads | Programmatic direct buy | 4–6 weeks | Medium-high |
| Matched markets | Brand, limited geography | 10–14 weeks | Medium |
| Converted control | Retail media, store-level | 6–10 weeks | High |
| Conversion lift study | Platform-owned inventory | 4–8 weeks | Medium |

The method choice shapes everything downstream — sample size, duration,

cost, and the kind of claim you can make at the end. Don't change it

mid-campaign, because a mid-flight method change forfeits the

incrementality claim entirely. If you must switch, treat the first

leg as a planning pilot and start the clock over.
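To make the gap between a platform-style pre/post number and an incrementality estimate concrete, here is a minimal sketch of a geo hold-out readout. It assumes a simple ratio-style difference-in-differences (control markets supply the trend that test markets would have followed without AI). A production readout would add market matching and confidence intervals. The function name `did_lift` and all market figures are hypothetical.

```python
from statistics import mean


def did_lift(test_pre, test_post, control_pre, control_post):
    """Relative lift in test markets versus the counterfactual implied
    by the control markets' pre-to-post trend.

    Each argument is a list of per-market average weekly sales.
    """
    control_growth = mean(control_post) / mean(control_pre)
    # Counterfactual: test markets follow the control trend without AI.
    expected_test_post = mean(test_pre) * control_growth
    return mean(test_post) / expected_test_post - 1.0


# Hypothetical per-market weekly sales, for illustration only.
test_pre = [100.0, 120.0, 80.0]
test_post = [121.0, 140.0, 99.0]
control_pre = [110.0, 90.0, 100.0]
control_post = [115.5, 94.5, 105.0]

lift = did_lift(test_pre, test_post, control_pre, control_post)
naive = mean(test_post) / mean(test_pre) - 1.0
print(f"naive pre/post lift: {naive:.1%}, incremental lift: {lift:.1%}")
# prints "naive pre/post lift: 20.0%, incremental lift: 14.3%"
```

In this made-up example the control markets grew 5% on their own, so about a quarter of the naive 20% lift was market drift rather than the campaign.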

How do you account for human cost honestly?

Most inflated ROI numbers assume AI is "free." It isn't. The

Forrester 2026 creative-operations benchmark put all-in human cost

per approved AI-assisted asset at roughly 35–55% of the comparable

hand-made asset — real savings, but nothing like the "90% cheaper"

claims that appeared in vendor decks in 2024. Line items that belong

in the cost column:

Creative direction and brief writing (senior hours).

Prompt engineering and iteration (specialist hours).

Brand spec maintenance (ongoing ops hours).

Review and approval (creative lead, brand reviewer, legal).

Compliance and disclosure work (regulated categories).

Tooling amortization (generation, prompt library, audit-log storage, measurement platforms).

Model inference cost (if you pay per-token or per-image).

Opportunity cost of the humans sitting in the loop.

Divide total campaign cost by the number of approved assets, not

generated candidates. Most teams generate 5–10x what they ship; only

the shipped count matters for unit economics. Reporting cost per

generated candidate understates unit cost by that same 5–10x

and is a classic tell that a program hasn't survived

finance review.
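The unit-economics arithmetic can be sketched in a few lines. This is an illustrative example only: the `CostSchedule` fields mirror the line items listed above, and the dollar amounts and asset counts are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CostSchedule:
    """All-in campaign cost lines in one currency (field names are illustrative)."""
    creative_direction: float
    prompt_engineering: float
    brand_spec_maintenance: float
    review_and_approval: float
    compliance: float
    tooling_amortization: float
    model_inference: float
    opportunity_cost: float = 0.0

    def total(self) -> float:
        # Sum every cost line, including ones that default to zero.
        return sum(vars(self).values())


def cost_per_asset(schedule: CostSchedule, asset_count: int) -> float:
    if asset_count <= 0:
        raise ValueError("asset_count must be positive")
    return schedule.total() / asset_count


# Hypothetical campaign: 400 candidates generated, 50 approved and shipped.
schedule = CostSchedule(12000, 8000, 3000, 9000, 4000, 2500, 1500)
per_generated = cost_per_asset(schedule, 400)
per_approved = cost_per_asset(schedule, 50)
print(f"per generated: {per_generated:.2f}, per approved: {per_approved:.2f}")
# prints "per generated: 100.00, per approved: 800.00"
```

With an 8:1 generate-to-ship ratio, the per-candidate figure looks eight times cheaper than the number finance will actually reconcile against.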

Why should you separate creative lift from placement lift?

Generative AI creative (new variants, new formats) and generative-

surface placement (appearing inside ChatGPT, Perplexity, Gemini

answers) are different interventions with different mechanics,

different audiences, and different measurement methods. A campaign

using both should measure them apart:

Creative lift: A/B within the same surface, new AI-assisted creative vs. hand-made baseline.

Placement lift: new surface exposure vs. no exposure, typically via geo hold-out or matched markets.

Blending them produces numbers no reviewer believes. If AI-generated

creative lifts a programmatic campaign 12% and a Perplexity placement

lifts awareness 18%, the honest report shows both. Summing the two

into "AI program lifted 30%" erases the mechanism and invites exactly

the finance follow-ups the number was supposed to avoid.

How do you report the decay curve?

Peak lift tells you the creative is fresh. The decay curve tells you

whether the program scales. A healthy 2026 generative AI program

typically shows a strong first 2–3 weeks, a tail at 30–70% of peak

through weeks 5–8, then a refresh step. The curve shape itself is the

deliverable, not a single number.

| Week | Typical lift (% of peak) | Action |
|---|---|---|
| 1–2 | 100% | Launch, monitor |
| 3–4 | 75–90% | Steady state |
| 5–6 | 50–70% | Plan refresh |
| 7–8 | 30–50% | Refresh triggered |
| 9+ | Reset to new peak with new creative | New baseline |

Report lift, decay, and refresh cadence together. A 35% peak with a

steady 20% tail across 12 weeks is often more valuable than a 60%

peak that decays to zero by week four. Finance understands curves;

they distrust single numbers.
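One way to compare curves rather than peaks is to sum lift over the reporting window and flag the week a refresh threshold is crossed. The sketch below is a minimal illustration. The two weekly curves are hypothetical shapes consistent with the comparison above, and the 50%-of-peak refresh floor is an assumed setting, not a standard.

```python
from typing import Optional, Sequence


def lift_weeks(curve: Sequence[float]) -> float:
    """Cumulative lift: the sum of weekly lift values (area under the curve)."""
    return float(sum(curve))


def refresh_week(curve: Sequence[float], floor: float = 0.5) -> Optional[int]:
    """First 1-indexed week, at or after the peak, where lift falls
    below floor * peak. Returns None if the floor is never crossed."""
    peak = max(curve)
    peak_index = list(curve).index(peak)
    for i in range(peak_index, len(curve)):
        if curve[i] < floor * peak:
            return i + 1
    return None


# Hypothetical weekly lift (%) over 12 weeks.
steady = [35, 35, 30, 25, 20, 20, 20, 20, 20, 20, 20, 20]
spiky = [60, 40, 20, 0, 0, 0, 0, 0, 0, 0, 0, 0]

print(lift_weeks(steady), refresh_week(steady))  # prints "285.0 None"
print(lift_weeks(spiky), refresh_week(spiky))    # prints "120.0 3"
```

Here the lower-peak curve delivers more than twice the cumulative lift and never trips the refresh floor, while the higher-peak curve needs new creative by week three.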

The difference between a well-measured generative AI advertising

program and a badly measured one isn't the number at the top of the

slide. It's whether finance can ask three follow-up questions and

get clean answers: baseline, incrementality, full cost.

How do you cut by generative surface?

Generative-surface inventory doesn't behave uniformly. Citation rates

and click-through patterns differ across ChatGPT, Perplexity, Gemini,

Copilot, and embedded AI formats in Meta and Google. The 2026

eMarketer surface-level benchmarks show citation rates varying 3–5x

across surfaces for the same brand in the same category. Report each

surface on its own line.

| Surface | Dominant buying model | Typical measurement lag |
|---|---|---|
| ChatGPT | Sponsored answer + shopping | 1–2 weeks to stable signal |
| Perplexity | Sponsored answer + sources | 1 week |
| Gemini | Sponsored card + presence | 2 weeks |
| Copilot | Embedded assistant placement | 2–3 weeks |
| Meta AI | In-feed generative creative | Near real-time |
| Google AI Overview | Citation + deep link | 1–2 weeks |

Blend surfaces only for executive summaries — never for planning.

Planning requires surface-level detail because budget decisions differ

per surface.

Common misconceptions

"AI programs should show triple-digit lift." In well-measured programs they usually don't. Mid-teens to mid-twenties lift against a clean baseline is a good result. Triple-digit numbers are typically baseline artifacts.

"Cost per generated asset is the right unit." It isn't. Cost per approved, shipped asset is the only unit finance cares about.

"Platform ROAS plus vibes is enough." Not in 2026. Every major brand now has a CFO-mandated incrementality cut, and Ad Age reporting shows 40%+ of Fortune 500 advertisers added formal AI-ROI audit lines to 2026 plans.

"Measurement can be wired after we launch." No: baselines set post-hoc are uninterpretable. Wire before launch or don't claim incrementality.

"Bigger lift means better program." Only against a clean baseline. A 90% lift with a broken baseline is weaker evidence than a 15% lift with a pre-registered hold-out.

What comes next

Three shifts are already visible and will reshape AI-ROI measurement

through 2026-2027. First, independent measurement providers are adding

native generative-surface tracking, so placement ROI can be cut

against the same standards as display and CTV. Second, boards and

audit committees are asking CMOs to show generative AI ROI as a

standing line item in quarterly reviews — the days of footnoted AI

pilots are ending. Third, the IAB Tech Lab's 2026 Attribution

Practices draft is pulling generative-surface metrics into the same

framework as classical channels, which will make cross-channel

measurement merely hard rather than impossible.

The operational consequence: measurement shifts from a post-campaign

report to a pre-campaign gate. A brand that cannot produce a

baseline, method, and cost plan at kickoff will increasingly find

budget blocked at that stage rather than funded and audited later.

What does a measurement stack that survives finance review look like?

A measurement stack survives finance review when each number in the

deck has a traceable source, a named method, and a counterfactual.

The reference 2026 stack has five components that talk to each other:

Experiment design: the baseline and method, pre-registered, with the expected sample size and detectable effect written down.

Data pipeline: unique IDs attached to every variant, flowing end to end from generation to conversion.

Incrementality engine: geo matching, synthetic controls, or conversion-lift studies run on a schedule, not ad-hoc.

Surface visibility layer: for generative surfaces, a monitor that tracks brand citation and sentiment across ChatGPT, Perplexity, Gemini, and Copilot on the queries that matter.

Finance reconciliation view: full cost schedule tied to approved-asset count, rolled up to campaign, program, and quarter.

When those five components share identifiers and time ranges, a

finance partner can reconstruct any claim in the deck without asking

you to pull another report. That reconstructability is what finance

reviewers actually mean when they ask for "rigor."
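A minimal sketch of what shared identifiers buy you, assuming every layer of the stack logs the same `variant_id`. The record shapes, field names, and the `reconcile` helper are hypothetical; a real pipeline would live in a warehouse rather than in-memory lists. The idea is the same, though: join on the ID and surface anything that cannot be traced back to an approved asset.

```python
from collections import defaultdict

# Hypothetical logs; each layer carries the same variant_id.
generated = [
    {"variant_id": "v1", "approved": True},
    {"variant_id": "v2", "approved": True},
    {"variant_id": "v3", "approved": False},
]
exposures = [
    {"variant_id": "v1", "surface": "ChatGPT", "impressions": 10_000},
    {"variant_id": "v2", "surface": "Perplexity", "impressions": 4_000},
]
conversions = [
    {"variant_id": "v1", "count": 120},
    {"variant_id": "v2", "count": 30},
    {"variant_id": "v9", "count": 5},  # no matching generation record
]


def reconcile(generated, exposures, conversions):
    """Join the logs on variant_id; return the per-variant rollup and a
    sorted list of IDs that do not trace back to an approved asset."""
    approved = {g["variant_id"] for g in generated if g["approved"]}
    rollup = defaultdict(lambda: {"impressions": 0, "conversions": 0})
    untraceable = set()
    for row in exposures:
        if row["variant_id"] in approved:
            rollup[row["variant_id"]]["impressions"] += row["impressions"]
        else:
            untraceable.add(row["variant_id"])
    for row in conversions:
        if row["variant_id"] in approved:
            rollup[row["variant_id"]]["conversions"] += row["count"]
        else:
            untraceable.add(row["variant_id"])
    return dict(rollup), sorted(untraceable)


rollup, untraceable = reconcile(generated, exposures, conversions)
print(rollup)
print(untraceable)  # prints "['v9']"
```

Any ID in the untraceable list is a number in the deck that finance cannot reconstruct, so it is worth resolving before the review rather than during it.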

Common pitfalls in the first 90 days

Most programs fail measurement review in the first quarter for

predictable reasons. Naming them in advance shortens the learning

curve:

Wrong baseline. Picking the strongest pre-period instead of a representative one.

Shifting method. Promising a geo hold-out, running a platform lift study instead.

Incomplete cost. Leaving out legal review, spec maintenance, and tooling amortization, the line items least visible to the marketing team.

Silent cannibalization. Not instrumenting whether AI creative drew budget or attention from other channels.

Single-surface thinking. Reporting a program as "AI advertising" when spend splits across three surfaces with different dynamics.

No refresh trigger. Letting decay erode lift without a committed response, then blaming the model.

Each pitfall is a measurement discipline failure, not a creative

failure. Catching them at planning is trivial; catching them at the

Q3 review is expensive.

Finance partners do not reject AI advertising claims because the

numbers are small. They reject them because the numbers cannot be

reconstructed. Reconstructability — not magnitude — is the standard

a measurement stack has to hold itself to.

How do you stand up honest ROI measurement?

Pick one campaign. Name the baseline on paper before day one.

Pre-register the incrementality method in writing with the finance

partner. Account for every hour and every tool in a full cost

schedule, divided by approved assets. Report decay alongside peak

with a pre-committed refresh trigger. Cut by surface in the

dashboard. Separate creative lift from placement lift in the

reporting. Sit down with finance before launch to agree the three

questions — baseline, incrementality, full cost — and the answer

format you'll deliver.

Build the five-component stack described above even if parts of it

start as spreadsheets. The discipline of the stack matters more than

the sophistication of the tools. Programs that start with a

whiteboard experiment design and a finance-shared cost tracker

graduate to more advanced tooling in 6–12 months; programs that

start by buying a measurement platform without the discipline

underneath typically run the same bad measurement faster.

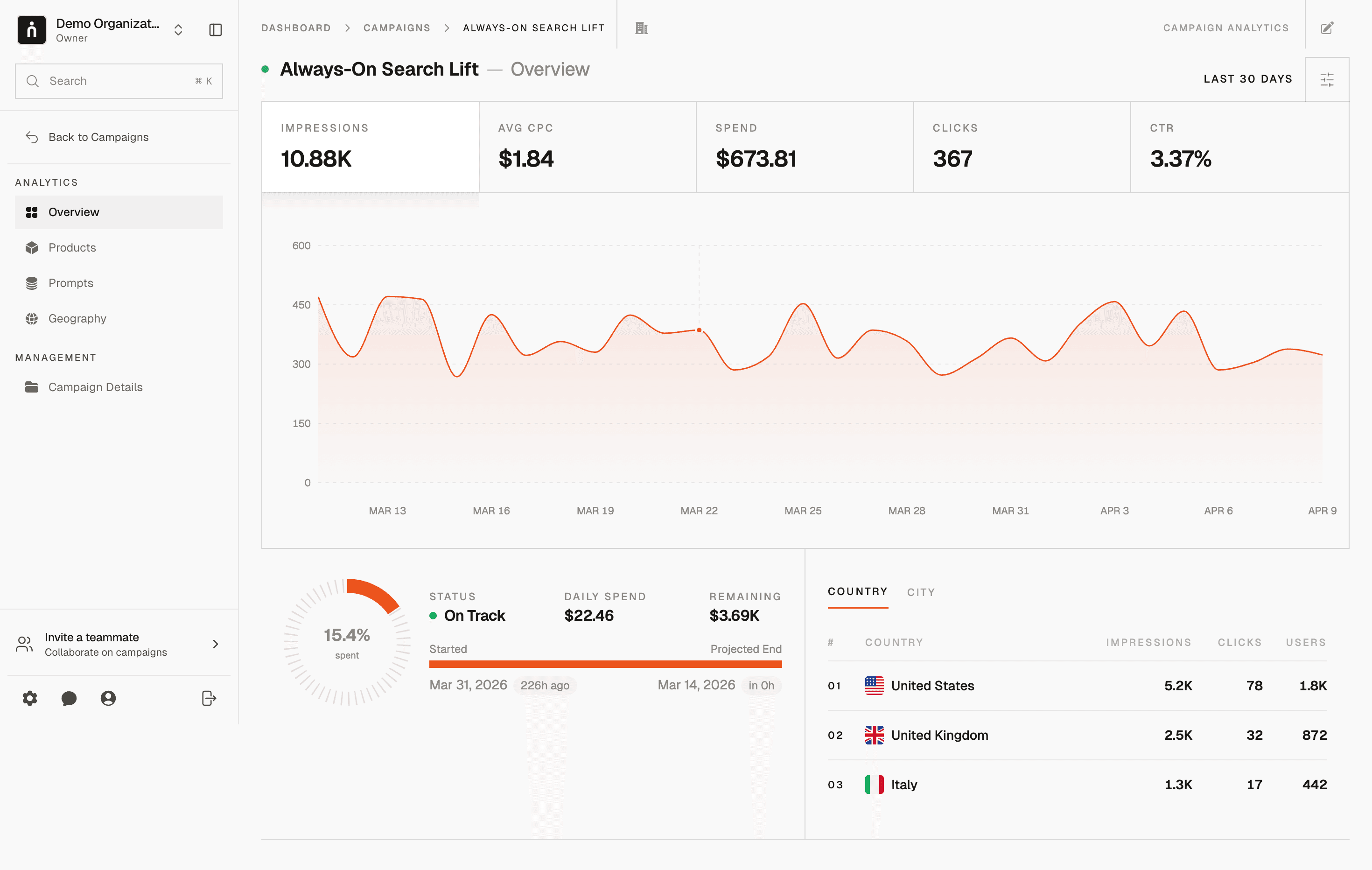

Thrad provides the generative-surface visibility cut — what an

audience sees about your brand inside ChatGPT, Perplexity, and Gemini

— which is the half of the measurement stack most brands still don't

have. Pair it with a DSP-side incrementality test and a full cost

schedule and you have the complete picture finance will actually fund.


Citations:

WARC, "Incrementality and Generative Advertising," 2026. https://warc.com

IAB Tech Lab, "Attribution Practices for Generative Campaigns," 2026. https://iabtechlab.com

eMarketer, "AI Ad Spend Forecast 2026," 2026. https://emarketer.com

Ad Age, "CFOs are auditing AI ad claims," 2026. https://adage.com

Gartner, "Marketing Measurement Benchmarks," 2026. https://gartner.com

Forrester, "Incrementality Methods for AI Programs," 2026. https://forrester.com

