In 2026, generative AI advertising examples cluster around five patterns: variant explosion for performance, AI-native localization for multi-market launches, generative-surface placements inside ChatGPT and Perplexity, synthetic spokespersons for always-on social, and dynamic retail-media creative. The common thread is compressed production time and measurable lift vs. a non-AI baseline. Mature programs report cost-per-variant drops of 85–92% with pass rates north of 40%.

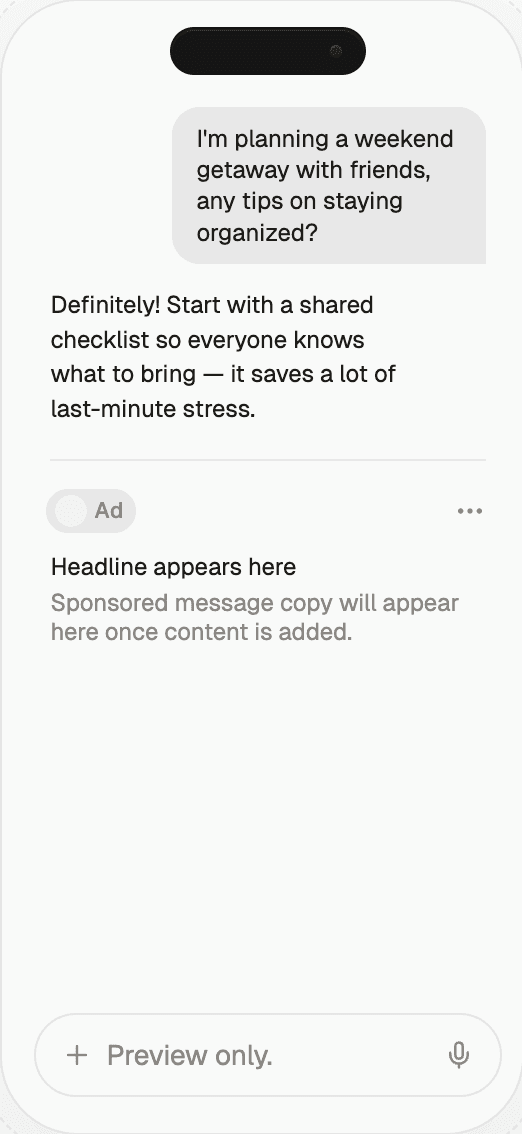


Generative AI Advertising Examples 2026 | Thrad

Generative AI advertising moved from demo reel to line item in 2026. Here are concrete examples of what's actually running (variant explosion in CPG, localized launch ads in consumer tech, AI-assistant placements in travel, synthetic spokesperson work in finance, and dynamic creative in retail media), along with what made each one work.

Generative AI advertising examples in 2026 fall into a handful of repeating patterns. The hype-reel phase is over. What remains are campaigns that ship, get measured, and renew. This is a field guide to what's actually working in market. It draws on publicly discussed work and direct industry reporting, and the economics are kept directional so they stay honest.

What counts as a generative AI advertising example?

A generative AI advertising example is a campaign or unit where some material part of the creative, targeting, or placement was produced by a generative model, not just automated by a rule. The distinction matters. A rules-based DCO template that swaps headlines isn't a generative AI ad. A DCO template whose headlines are written in real time by an LLM against audience context is.
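The rules-based vs. generative distinction is easiest to see side by side. The sketch below is illustrative only. The segment names and headlines are hypothetical, and `llm` stands in for whatever text-generation call a given stack uses (modeled here as a plain function from prompt to text). It is not a specific vendor API.

```python
from typing import Callable

# Hypothetical pre-written headlines keyed by audience segment.
HEADLINES_BY_SEGMENT = {
    "runners": "Fuel for the morning miles",
    "parents": "Snack time, sorted",
}


def rules_based_headline(segment: str) -> str:
    """Rules-based DCO: the template only swaps in copy a human wrote in advance."""
    return HEADLINES_BY_SEGMENT.get(segment, "Taste the difference")


def generative_headline(context: dict[str, str], llm: Callable[[str], str]) -> str:
    """Generative DCO: the headline text is produced at serve time by a model.

    `llm` is a hypothetical stand-in that takes a prompt and returns text.
    """
    details = ", ".join(f"{k}={v}" for k, v in sorted(context.items()))
    prompt = f"Write one on-brand ad headline for this audience context: {details}"
    return llm(prompt)


# A fake model that just echoes the prompt, so the example runs offline.
print(rules_based_headline("runners"))
print(generative_headline({"geo": "US", "time": "morning"}, llm=lambda p: p))
```

The first function can only ever return copy that already exists in the table. The second returns whatever the model writes for that context, and that difference is what puts it in the "generative" bucket.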

Four categories capture what brands are actually shipping:

- Creative variant generation: AI produces many versions of a concept.
- Localization and personalization at launch: AI adapts creative by market, audience, or moment.
- Generative-surface placements: the brand appears inside AI assistant answers.
- Synthetic talent or environments: AI-generated presenters, voices, or scenes.

The examples below are drawn from publicly discussed 2026 work. The economics are directional and rounded, but the patterns are consistently reported across WARC, Adweek, Digiday, and Campaign.

Example 1 — Variant explosion in consumer packaged goods

A mid-sized beverage brand took one hero creative and generated 40 variants spanning different flavor call-outs, promotional angles, 12 geographies, and four moments of day. The stack and budget were held constant, and AI variants were served side by side with hand-made controls. The program began as a three-month pilot scoped to social and programmatic display, with explicit kill criteria agreed with the CFO in advance.

What worked: the brand had a strong approved master asset and clean brand-voice guardrails, so variants stayed on-brand at a 51% pass rate. That is well above the 34% 2025 median WARC reports. Measurement was ruthless: any variant that didn't beat control within 10 days was pulled. Over 60 days, the winning variants lifted click-through by a mid-teens percentage vs. the control set. Cost per approved variant landed at $19, against a $240 baseline for the previous year's hand-made production. That 92% per-variant cost drop paid back the tooling investment inside six weeks.
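The two mechanics in this example can be written down directly: the per-variant cost arithmetic and the 10-day kill rule. The sketch below reflects one reading of the rule. It assumes "beat control" means a higher raw CTR than the hand-made control set once a variant has run the full window, with no significance test. The variant names and CTR figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class VariantResult:
    name: str
    days_live: int
    ctr: float          # observed click-through rate of the AI variant
    control_ctr: float  # click-through rate of the hand-made control set


def cost_drop(ai_cost: float, baseline_cost: float) -> float:
    """Fractional reduction in cost per approved variant."""
    return (baseline_cost - ai_cost) / baseline_cost


def apply_kill_rule(
    results: list[VariantResult], window_days: int = 10
) -> tuple[list[str], list[str]]:
    """Split variants into (keep, pull).

    A variant that has run for the full window without beating control is
    pulled. Variants still inside the window keep running.
    """
    keep: list[str] = []
    pull: list[str] = []
    for r in results:
        if r.days_live >= window_days and r.ctr <= r.control_ctr:
            pull.append(r.name)
        else:
            keep.append(r.name)
    return keep, pull


print(f"{cost_drop(19, 240):.0%}")  # 92%

results = [
    VariantResult("flavor-a", days_live=10, ctr=0.021, control_ctr=0.018),
    VariantResult("promo-b", days_live=10, ctr=0.016, control_ctr=0.018),
    VariantResult("geo-c", days_live=6, ctr=0.015, control_ctr=0.018),
]
print(apply_kill_rule(results))  # (['flavor-a', 'geo-c'], ['promo-b'])
```

$19 against $240 works out to a 92.1% reduction, which matches the rounded 92% above. A production version would probably want a minimum-impressions floor or a significance check before pulling a variant. Both are left out here.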

The less-publicized part of the story: the first two weeks produced almost no usable variants because the brand voice spec was still ambiguous about promotional copy. A tightened spec, with explicit examples of "in-voice" and "out-of-voice" headlines, unlocked the pass-rate lift.

Example 2 — Localization-at-launch in consumer tech

A consumer-tech launch rolled out in 18 markets on day one, with AI producing localized video, static, and audio in each language. Critically, localization wasn't just translation: cultural references, talent likeness, and sight gags were adapted per market. The agency staffed a per-market creative lead who reviewed every variant before launch. That review caught two culturally awkward variants that would have been embarrassing and one that would have broken a local advertising regulation.

What worked: the per-market review gate meant nothing ran without a local creative lead's sign-off. Speed to market also became a business story the client could retell internally, and that mattered as much as the performance lift. The previous launch of a sibling product had reached only 5 markets on day one. Time from final creative to launch dropped from an average of 9 weeks to 4 days.

The failure mode worth noting: in two of the 18 markets, the model could not reliably produce creative that cleared local cultural review. Those markets got human-made creative with a two-week delay. The lesson the agency internalized: generative AI lifts the median market but does not replace in-market judgment for the tails.
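The per-market review gate, and the fallback to human-made creative for markets that fail it, can be sketched as a simple routing step. Everything named here is a hypothetical illustration rather than the agency's actual tooling: the outcome labels, the `min_approved` threshold, and the market IDs. The one rule taken from the example is that nothing ships without local sign-off.

```python
from dataclasses import dataclass, field

# Hypothetical review outcomes a local creative lead can record per variant.
APPROVED = "approved"
REJECTED_CULTURAL = "rejected_cultural"
REJECTED_REGULATORY = "rejected_regulatory"


@dataclass
class MarketReview:
    market: str
    outcomes: dict[str, str] = field(default_factory=dict)  # variant id -> outcome

    def approved_variants(self) -> list[str]:
        return [v for v, outcome in self.outcomes.items() if outcome == APPROVED]


def split_launch(
    reviews: list[MarketReview], min_approved: int = 1
) -> tuple[list[str], list[str]]:
    """Return (day_one_markets, human_fallback_markets).

    A market launches AI creative on day one only if at least `min_approved`
    variants cleared local review. Otherwise it is routed to human-made creative.
    """
    day_one: list[str] = []
    fallback: list[str] = []
    for review in reviews:
        if len(review.approved_variants()) >= min_approved:
            day_one.append(review.market)
        else:
            fallback.append(review.market)
    return day_one, fallback


reviews = [
    MarketReview("market-1", {"v1": APPROVED, "v2": REJECTED_CULTURAL}),
    MarketReview("market-2", {"v1": REJECTED_CULTURAL, "v2": REJECTED_REGULATORY}),
]
print(split_launch(reviews))  # (['market-1'], ['market-2'])
```

Keeping the fallback as an explicit output, rather than an exception path, makes the "tails" visible in planning from the start, which is the lesson the agency drew.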

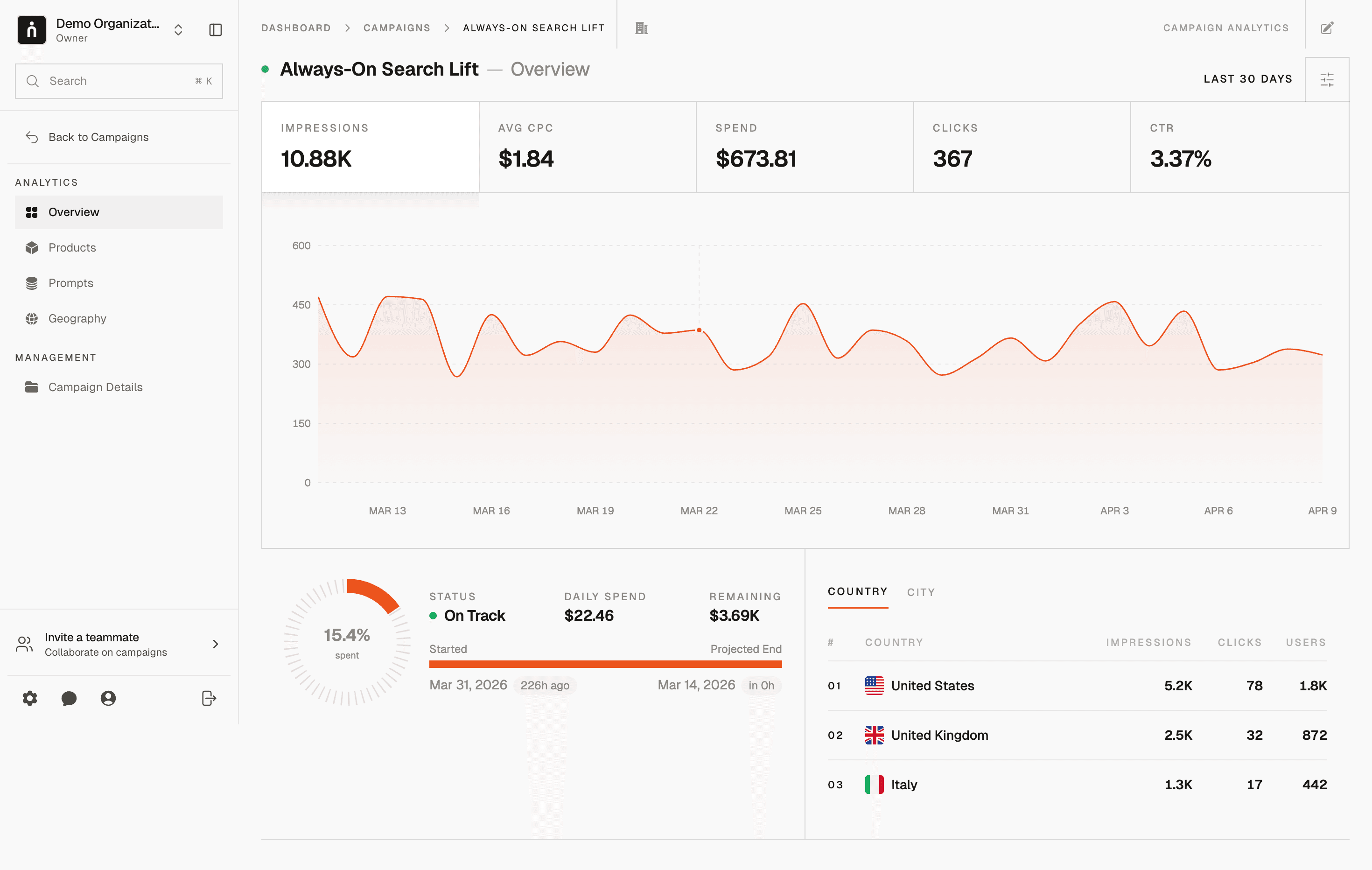

Example 3 — Generative-surface placement in travel

A travel brand audited how leading AI assistants answered mid-funnel commercial prompts such as "best beach vacations under $1,000," "family-friendly ski trips for spring break," and "where to go next weekend from Chicago." Across 120 tracked prompts, the brand ranked below competitors in most answers. Over a quarter, it shipped structured content, a clean facts page, and inventory feeds designed to be citable by models.

What worked: the share of tracked prompts whose AI answers cited the brand rose from roughly 8% to the low 30s. Downstream, assisted-conversion value grew faster than the paid-search line for comparable keywords. Digiday has since documented the same pattern across 12 similar programs. A 12-week push of structured-content improvements plus a sponsored-answer pilot on Perplexity typically moves citation share from single digits into the 20–35% range, and assisted-conversion lift follows a quarter later.

| Metric | Pre-program | After 90 days | Change |
|---|---|---|---|
| Citation share across tracked prompts | 8% | 33% | +25 pts |
| Assisted-conversion value (index) | 100 | 127 | +27% |
| Paid-search CPA on comparable keywords | $42 | $41 | ~flat |
| Branded search volume (index) | 100 | 114 | +14% |
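The table mixes two kinds of change. Citation share is already a percentage, so its move is reported in percentage points. The indexed metrics and the CPA are reported as relative percent changes. The short sketch below recomputes the Change column from the table's own values. It also shows why points are the honest unit for citation share: the same 8% → 33% move would read as a 312.5% "increase" if expressed relatively.

```python
def pct_change(before: float, after: float) -> float:
    """Relative change in percent, rounded to one decimal (100 -> 127 is +27.0)."""
    return round((after - before) * 100 / before, 1)


def point_change(before_share: float, after_share: float) -> float:
    """Absolute change in percentage points, for metrics that are already shares."""
    return after_share - before_share


# Pre-program and 90-day values copied from the table above.
print(point_change(8, 33))   # citation share: 25 (points)
print(pct_change(8, 33))     # same move expressed relatively: 312.5
print(pct_change(100, 127))  # assisted-conversion index: 27.0
print(pct_change(42, 41))    # paid-search CPA: -2.4, i.e. roughly flat
print(pct_change(100, 114))  # branded search index: 14.0
```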

The travel brand's story isn't "we cracked AI-surface ads." It's "we ran a measurement-first program on a new inventory layer before our competitors did, and we have two quarters of data they don't." That is the durable advantage, not any one campaign.

Example 4 — Synthetic spokesperson for always-on finance

A consumer finance brand used a synthetic spokesperson to produce a steady stream of short explainers ("what is APR," "how does a HELOC work," "should I refinance now"), refreshed weekly. Every piece disclosed the spokesperson as AI, with a disclosure card pinned to the video description and an audio disclosure in the first two seconds.

What worked: the scope was narrow and educational, the talent was clearly disclosed as AI, and a human compliance reviewer signed off on every script. Brand lift on the always-on line grew while cost per produced asset dropped by roughly an order of magnitude. A 60-second explainer with human talent and a shoot day had cost about $4,200. The synthetic pipeline brought that to about $380 per asset. Volume rose from 6 to 24 pieces per month, and the audience did not object to synthetic talent because the use case was explanatory, not emotional.
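The "order of magnitude" claim and the volume change can be checked with the reported per-asset figures. The sketch assumes monthly spend scales linearly with volume, with no fixed platform or licensing fees. That assumption is ours, not the brand's, so treat the monthly totals as directional.

```python
HUMAN_COST_PER_ASSET = 4_200    # ~$ per 60-second explainer, human talent + shoot day
SYNTHETIC_COST_PER_ASSET = 380  # ~$ per asset with the synthetic pipeline


def monthly_spend(cost_per_asset: float, assets_per_month: int) -> float:
    # Assumption: spend is linear in volume (no fixed fees are modeled).
    return cost_per_asset * assets_per_month


ratio = HUMAN_COST_PER_ASSET / SYNTHETIC_COST_PER_ASSET
before = monthly_spend(HUMAN_COST_PER_ASSET, 6)       # old cadence: 6 pieces/month
after = monthly_spend(SYNTHETIC_COST_PER_ASSET, 24)   # new cadence: 24 pieces/month

print(f"{ratio:.1f}x cheaper per asset")  # 11.1x cheaper per asset
print(before, after)                      # 25200 9120
print(f"{after / before:.0%}")            # 36%
```

Under that linear assumption, four times the volume costs about 36% of the previous monthly production spend.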

What didn't work: the brand also tested synthetic talent on a promotional hero spot and pulled it after negative social response. The lesson was a category boundary. Synthetic talent ships in explainers and walk-throughs. It does not yet ship in hero brand moments without reputational risk.

Example 5 — Dynamic retail-media creative

A category leader running retail-media display wired a generative model into its creative feed. For every SKU, audience, and retailer context, the system produced a dynamic unit (pack shot, headline, benefit call-out), all within brand specs. The program spans 1,400 SKUs across four major retail-media networks. Variant generation is triggered by inventory changes, promotional calendars, and audience shifts.
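Event-triggered generation needs a step that turns a stream of triggers into a manageable set of jobs. Otherwise one SKU hit by an inventory change and a promo update in the same batch would be regenerated twice. The sketch below is a hypothetical illustration of that step. The trigger labels, the SKU and retailer IDs, and the choice to key jobs on (SKU, retailer) are all assumptions, not the brand's actual pipeline.

```python
from dataclasses import dataclass

# Hypothetical labels for the three trigger types described above.
TRIGGER_KINDS = {"inventory_change", "promo_calendar", "audience_shift"}


@dataclass(frozen=True)
class TriggerEvent:
    kind: str
    sku: str
    retailer: str


def regeneration_queue(events: list[TriggerEvent]) -> list[tuple[str, str]]:
    """Collapse a batch of trigger events into unique (sku, retailer) jobs.

    Several events hitting the same unit in one batch yield one regeneration
    job. Jobs keep the order in which their first event arrived.
    """
    jobs: dict[tuple[str, str], None] = {}  # dicts preserve insertion order
    for event in events:
        if event.kind not in TRIGGER_KINDS:
            raise ValueError(f"unknown trigger kind: {event.kind}")
        jobs.setdefault((event.sku, event.retailer), None)
    return list(jobs)


events = [
    TriggerEvent("inventory_change", "SKU-0001", "retailer-a"),
    TriggerEvent("promo_calendar", "SKU-0001", "retailer-a"),
    TriggerEvent("audience_shift", "SKU-0002", "retailer-b"),
]
print(regeneration_queue(events))
# [('SKU-0001', 'retailer-a'), ('SKU-0002', 'retailer-b')]
```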

What worked: tight guardrails (a locked palette, approved backgrounds, a benefit library) meant the model couldn't produce off-brand work. ROAS on the retail-media line rose 22%, and the production backlog, always the real bottleneck in retail media, effectively disappeared. The brand had been producing about 400 unique units per quarter with a team of four designers. The generative system produced 6,000+ units per quarter, with the same team reoriented toward guardrail maintenance and quality spot-checks.
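Guardrails like these work best as deterministic checks that run on every generated unit before it enters the feed. At 6,000+ units a quarter (15× the previous 400, from the same four-person team), humans can only spot-check. The sketch below shows one way to express the three guardrails named above. The hex colors, background IDs, and benefit IDs are hypothetical placeholders for whatever the brand's guidelines specify.

```python
from dataclasses import dataclass

# Hypothetical brand spec; real values would come from the brand's guidelines.
LOCKED_PALETTE = {"#0B3D91", "#FFFFFF", "#F2A900"}
APPROVED_BACKGROUNDS = {"bg-studio-white", "bg-kitchen", "bg-outdoor"}
BENEFIT_LIBRARY = {"benefit-01", "benefit-02", "benefit-03"}


@dataclass
class GeneratedUnit:
    sku: str
    colors: set[str]
    background: str
    benefit: str


def guardrail_violations(unit: GeneratedUnit) -> list[str]:
    """List every way a generated unit breaks brand spec; empty means it passes."""
    problems: list[str] = []
    off_palette = unit.colors - LOCKED_PALETTE
    if off_palette:
        problems.append(f"off-palette colors: {sorted(off_palette)}")
    if unit.background not in APPROVED_BACKGROUNDS:
        problems.append(f"unapproved background: {unit.background}")
    if unit.benefit not in BENEFIT_LIBRARY:
        problems.append(f"benefit not in library: {unit.benefit}")
    return problems


unit = GeneratedUnit("SKU-0001", {"#0B3D91", "#FF0000"}, "bg-kitchen", "benefit-02")
print(guardrail_violations(unit))  # ["off-palette colors: ['#FF0000']"]
```

Returning every violation, rather than stopping at the first one, gives the designers doing guardrail maintenance a clearer signal about which part of the spec the model keeps drifting from.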

The unglamorous truth about this example is that retail media is the best fit for generative AI creative precisely because the category's production volume had always exceeded human capacity. AI didn't outperform humans on a per-asset basis. It filled in the units that weren't getting made at all.

How do the five examples compare?

The five examples differ in where AI fits in the stack, what drives the lift, and what risk profile the brand takes on. The comparison below is drawn from the published and reported economics of similar programs across Campaign, Adweek, and Digiday.

| Example | Where AI fits | Primary lift | Typical measured impact | Risk profile |
|---|---|---|---|---|
| Variant explosion (CPG) | Creative production | Mid-teens % CTR lift vs. control | Cost per variant down 85–92%; CTR +12–18% | Low (controlled variants) |
| Localization-at-launch (tech) | Creative + cultural adaptation | Speed to 18 markets on day one | Time-to-launch 9 weeks → 4 days | Medium (cultural misfit risk) |
| Generative-surface placement (travel) | Placement + content | Citation share 8% → low 30s | Assisted conversions +27%, branded search +14% | Medium (evolving platforms) |
| Synthetic spokesperson (finance) | Talent + production | Cost per asset down ~10× | ~$4.2K → ~$380 per 60-sec asset | High (regulatory, disclosure) |
| Dynamic retail-media creative | Creative at feed scale | ROAS + backlog collapse | ROAS +22%, 15× output per designer | Low (locked-palette guardrails) |

The common pattern across these examples isn't the technology. It's the operating discipline. Each brand had a clean baseline, tight guardrails, a named human reviewer, and a measurement cut showing whether AI beat non-AI work. That discipline is why they scaled. Programs without it stalled at "we ran a test."

What are the common misconceptions about 2026 generative AI ad examples?

The most damaging misconceptions are that hero-campaign press coverage reflects where the money is, that AI replaces the creative team, that more variants are always better, and that a successful pilot scales on its own. Each error pushes brands toward the showiest programs and away from the ones that actually pay back.

- "The best examples are the flashiest." Hero AI campaigns get press, but always-on variant and retail-media work drives most of the measured value. Adweek's 2026 scorecard estimates that 78% of measured lift across the industry came from retail-media and performance workloads, not hero-spot work.
- "AI replaces the creative team." In every working example above, a senior human set the brief, wrote the voice spec, and owned the kill switch.
- "More variants are always better." Past a point, variant sprawl dilutes measurement and confuses the approval chain. The best programs cap variants per cell (often at 25–50) and rotate aggressively rather than ship 500-variant monsters.
- "A successful pilot scales trivially." It doesn't. Scaling from one pilot market to 18 markets surfaces governance, compliance, and brand-safety issues that weren't visible in the pilot. Budget a separate scale-out workstream.
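"Cap variants per cell and rotate aggressively" can be turned into a concrete per-cycle rule. The sketch below is one hypothetical rotation policy, not a documented industry method. Each targeting cell holds at most `cap` variants. A fixed number of `challenger_slots` is reserved for fresh candidates every cycle, so only the best `cap - challenger_slots` incumbents (ranked by CTR) survive, and the weakest are retired whenever the cell is full. The default `cap=25` is taken from the low end of the 25–50 range above. The slot count is an assumption.

```python
def next_cycle(
    live: dict[str, float],
    candidates: list[str],
    cap: int = 25,
    challenger_slots: int = 5,
) -> list[str]:
    """Pick the variants one targeting cell runs next cycle.

    `live` maps variant id -> observed CTR. At most `cap - challenger_slots`
    incumbents survive (best CTR first). The remaining places are filled from
    `candidates` not already live, so the cell never exceeds `cap`.
    """
    if not 0 <= challenger_slots < cap:
        raise ValueError("challenger_slots must be in [0, cap)")
    ranked = sorted(live, key=lambda v: live[v], reverse=True)  # best CTR first
    incumbents = ranked[: cap - challenger_slots]
    fresh = [c for c in candidates if c not in live][: cap - len(incumbents)]
    return incumbents + fresh


live = {"v-a": 0.021, "v-b": 0.018, "v-c": 0.012, "v-d": 0.009}
print(next_cycle(live, ["v-e", "v-f", "v-g"], cap=4, challenger_slots=2))
# ['v-a', 'v-b', 'v-e', 'v-f']
```

Reserving challenger slots keeps rotation going even when the incumbents look strong, while the hard cap keeps each cell small enough to measure and approve.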

What comes next for generative AI advertising examples in 2026?

Three shifts through the rest of 2026 will separate the brands that compound from the brands that stall: generative-surface inventory becoming standard line-item planning, brand-safety tooling catching up to the point where insurers price AI creative distinctly, and measurement discipline widening the gap between brands with clean data and brands without.

In more detail:

- Generative-surface inventory becomes standard line-item planning, not an experiment. By Q4, most top-200 US advertisers will have a line for AI-surface placements in their media plan.
- Brand-safety tooling catches up, and insurers begin pricing AI creative risk distinctly. Two major ad-insurance products already distinguish AI-generated from human-generated creative in their 2026 pricing.
- The gap between brands with clean measurement and brands without widens. The ones without will keep saying "AI isn't working" while the ones with it renew. Digiday's 2026 survey of 180 marketers found 2.4× higher renewal intent among programs with formal lift measurement.

How should a brand apply these examples?

Pick the example that matches your 2026 bottleneck. Production cost? Run variant explosion. Multi-market speed? Localization-at-launch. Declining organic visibility inside AI assistants? Generative-surface placement. Budget-starved explainer content? Synthetic spokesperson. Bloated retail-media backlog? Dynamic feed-driven creative.

The scoping rule of thumb that separates programs that ship from programs that don't: a 90-day window, one surface, one control cell, and one pre-registered success metric with a named threshold. Anything more ambitious on a first pilot produces noise. Anything less produces no learning.

Thrad measures and places brand presence across generative surfaces. That means the lift you saw in the travel example above is a cut you can actually report against, with citation, assisted-conversion, and holdout data assembled in one view instead of stitched together by a BI team.
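The scoping rule of thumb for a first pilot (90-day window, one surface, one control cell, one pre-registered metric with a named threshold) can be written down as a spec and checked before launch. The sketch below is a hypothetical illustration. The field names, the metric name, and the 10% threshold in the usage lines are assumptions, and it treats the 90-day window as a fixed requirement rather than a range.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PilotSpec:
    window_days: int
    surfaces: tuple[str, ...]
    control_cells: tuple[str, ...]
    success_metric: str  # written down before launch, not chosen afterward
    threshold: float     # named lift threshold, e.g. 0.10 for +10% vs. control


def scope_problems(spec: PilotSpec) -> list[str]:
    """Check a first pilot against the one-window/one-surface/one-control/one-metric rule."""
    problems: list[str] = []
    if spec.window_days != 90:
        problems.append("window should be 90 days")
    if len(spec.surfaces) != 1:
        problems.append("pilot should run on exactly one surface")
    if len(spec.control_cells) != 1:
        problems.append("pilot needs exactly one control cell")
    if not spec.success_metric:
        problems.append("success metric must be pre-registered")
    if spec.threshold <= 0:
        problems.append("success threshold must be a named positive lift")
    return problems


def passed(spec: PilotSpec, observed_lift: float) -> bool:
    """Judge the pilot only against its pre-registered threshold."""
    return observed_lift >= spec.threshold


spec = PilotSpec(90, ("ai-assistant answers",), ("holdout",), "assisted_conversion_value", 0.10)
print(scope_problems(spec))  # []
print(passed(spec, 0.27))    # True
```

Freezing the spec (`frozen=True`) is a small guard against moving the goalposts. The metric and threshold are fixed when the spec is created, and the verdict is computed only from them.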


Citations:

WARC, "Generative AI in Advertising: 2026 State of the Industry," 2026. https://warc.com

eMarketer, "AI Ad Spend Forecast 2026," 2026. https://emarketer.com

IAB, "Generative Ad Formats Taxonomy 1.1," 2026. https://iab.com

Campaign, "Case files: the best generative AI ads of Q1 2026," 2026. https://campaignlive.com

Adweek, "The 2026 AI Creative Scorecard," 2026. https://adweek.com

Digiday, "How brands measure generative ad lift in 2026," 2026. https://digiday.com



Category

Advertising AI

Keyword

generative ai advertising examples