The 2026 generative AI advertising compliance checklist covers five operational domains: disclosure (is AI use visible where required), rights (likeness, voice, training data provenance), claim substantiation (what the model says about the product), data (how prompts and personal data are handled), and audit trail (every approved asset logged). Brands assign a named owner per domain and run the checklist on every published asset. IAB Europe's 2026 implementation guide and the EU AI Office notes make the review cadence explicit: per-asset for disclosure, claims, and audit trail; per-campaign for rights; quarterly for data handling.


Generative AI Ad Compliance Checklist 2026

Compliance for generative AI advertising in 2026 is operational, not theoretical. Brands need a checklist they can actually run on every asset (disclosure, rights, claims, data, audit trail), with named owners and a review cadence. This is that checklist, written to be operated by a marketing-ops or legal-ops team without a custom build.

Compliance for generative AI advertising in 2026 is not a one-pager from legal. It is a running operational checklist that has to be applied to every asset, every time. IAB Europe's 2026 implementation guide and the EU AI Office's advertising notes make the expectation explicit: per-asset review on disclosure, claims, and audit trail; per-campaign review on rights; quarterly review on data handling. This is the checklist working teams actually operate against.

What is a generative AI advertising compliance checklist?

A generative AI advertising compliance checklist is the set of operational controls that turns a generative creative pipeline into something a brand can defend when asked by a regulator, a consumer, or an internal audit committee. It lists the specific checks to run on every asset, the named owner for each domain, the documented procedure, and the review cadence. The 2026 list covers five domains.

The five domains:

Disclosure — is AI use communicated where required?

Rights — likeness, voice, music, training-data provenance.

Claims — what does the ad say about the product, and how is it substantiated?

Data — how are prompts and personal data handled?

Audit trail — can the brand reconstruct what happened, asset by asset?

Every domain needs a named human owner and a documented procedure. ANA's 2026 legal and regulatory update for AI advertising lists these exact five domains; IAB Europe uses the same structure with slightly different ordering. The convergence is not accidental — these are the five places where 2024–2025 enforcement actions and adjudications actually landed.

What must you disclose, and when?

Disclosure applies where a model is materially the author of the creative. A safe 2026 operating default is to disclose synthetic humans, cloned voices, wholly generated scenes, and AI-generated performance claims. Subtle generative retouching and automated localization without voice cloning typically don't require disclosure. Tighter rules apply in regulated categories (health, financial services, children's content, political).

| Creative element | Default disclosure stance | Watch-outs |
|---|---|---|
| Synthetic humans (full faces / personas) | Disclose | Right-of-publicity concerns in the US; data protection in the EU |
| Cloned voices | Disclose | Talent contract language; performers' union rules |
| Fully generated scenes | Disclose | "Dramatization" labeling conventions still apply |
| AI-assisted copy | No disclosure typically | Claims still require substantiation |
| Upscaling / restoration | No disclosure typically | Material alteration still triggers disclosure |
| Style transfer / look-and-feel | Case-by-case | Disclose if the average consumer could misread the source |
| Automated localization (text) | No disclosure typically | Subject to accuracy obligations |
| Voice localization with cloning | Disclose | Even if the original performer consented |

The EU AI Office's 2026 advertising implementation notes codify this distinction. FTC 2025 guidance aligns in practice. IAB Tech Lab's v1 standards define the machine-readable tags that accompany the human-visible label. The checklist item: for every asset, answer "does the average consumer correctly understand this as AI-generated where required?"
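
One way to make the default stances operational is a lookup that the approval tool consults per asset. The sketch below is illustrative, not a legal rule engine: the element keys, the `disclosure_decision` function, and the choice to route regulated-category assets to human review are assumptions added here, while the stances themselves are copied from the table above.

```python
from enum import Enum


class Stance(Enum):
    DISCLOSE = "disclose"
    NO_DISCLOSURE_TYPICALLY = "no disclosure typically"
    CASE_BY_CASE = "case-by-case"


# Default stances copied from the table; the keys are hypothetical labels.
DEFAULT_STANCE = {
    "synthetic_human": Stance.DISCLOSE,
    "cloned_voice": Stance.DISCLOSE,
    "fully_generated_scene": Stance.DISCLOSE,
    "ai_assisted_copy": Stance.NO_DISCLOSURE_TYPICALLY,
    "upscaling_restoration": Stance.NO_DISCLOSURE_TYPICALLY,
    "style_transfer": Stance.CASE_BY_CASE,
    "text_localization": Stance.NO_DISCLOSURE_TYPICALLY,
    "voice_localization_cloned": Stance.DISCLOSE,
}

# Regulated categories named in the text; tighter rules apply there.
REGULATED_CATEGORIES = {"health", "financial_services", "children", "political"}


def disclosure_decision(elements, category):
    """Return 'disclose', 'review', or 'no_label' for one asset."""
    # Unknown elements are routed to a human rather than guessed at.
    stances = [DEFAULT_STANCE.get(e, Stance.CASE_BY_CASE) for e in elements]
    if Stance.DISCLOSE in stances:
        return "disclose"
    # Assumption: regulated categories always get a human look.
    if Stance.CASE_BY_CASE in stances or category in REGULATED_CATEGORIES:
        return "review"
    return "no_label"
```

An asset that combines AI-assisted copy with a cloned voice returns `"disclose"`, because the strictest element decides the outcome. The same copy alone in a health campaign returns `"review"` rather than a silent pass.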

Which rights do you need to clear before generation?

Rights issues caught post-publish are 10–20x more expensive than rights issues caught pre-brief. The 2026 checklist requires cleared rights for every human likeness, every voice, every piece of music or audio, every stock asset, and, newly, for the training data behind the model itself. Ad Age's 2026 compliance reporting documented several seven-figure settlements in 2025 that a pre-brief rights check would have avoided.

Before anything is generated:

Likeness rights for every human shown, real or synthetic. Synthetic humans may still create rights issues if they resemble real people.

Voice rights for every voice used, real or cloned. Cloning requires explicit contractual permission plus, increasingly, performers' union clearance.

Music rights for any audio, whether licensed or AI-generated. AI-generated music vendors vary in the commercial-use rights they grant; check the vendor contract per asset.

Training-data provenance on the model itself — does the vendor warrant the training set? If not, the brand carries the risk of downstream claims from rightsholders.

Background assets — stock licenses, talent releases, trademarks in frame, third-party logos.

A practical checklist line: "for this asset, I can produce contracts or licenses covering every rights holder visible or audible in the output within 24 hours of a request." If that sentence isn't true, the asset isn't ready to ship.
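
The checklist line above can be expressed as a simple coverage check. This is a minimal sketch under assumed names: `RightsHolder`, `uncovered_rights`, and `ready_to_ship` are hypothetical, and a real system would also verify that each document is current and covers the intended use.

```python
from dataclasses import dataclass, field


@dataclass
class RightsHolder:
    name: str
    kind: str  # e.g. "likeness", "voice", "music", "stock", "training_data"
    document_ids: list = field(default_factory=list)  # contracts or licenses on file


def uncovered_rights(holders):
    """Return the holders for which no contract or license is on file."""
    return [h for h in holders if not h.document_ids]


def ready_to_ship(holders):
    # An asset with no recorded rights holders counts as unreviewed, not as clear.
    return bool(holders) and not uncovered_rights(holders)
```

Treating an empty list as "not ready" is a deliberate choice in this sketch: it forces someone to record the rights holders before the asset can pass.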

Domain 3 — Claims: what the model can and cannot say

Claim substantiation is the biggest 2026 compliance risk. Models cheerfully invent performance numbers, comparative claims, and clinical-sounding assertions if not constrained. A regulated claim generated by a model, approved without substantiation, and published at scale is the textbook enforcement case. The FTC's 2025 endorsement guidance specifically addresses this scenario, and WARC's regulation tracker documents at least six public enforcement matters globally in the prior 12 months.

Operational controls:

Pre-approved claim library. Models may only produce copy drawn from an approved claims list with linked substantiation. Implementation: retrieval-augmented generation with an approved-claims corpus, plus deterministic filters for claim-pattern language.

Regulated-category allowlists. In pharma, finance, and consumer health, route every claim through a subject-matter reviewer. The reviewer is the gate; the model is the producer.

No unhedged performance claims. "Clinically shown," "9 out of 10," "the #1 X" may not appear unless the study and language are pre-cleared and linked to substantiation.

Comparison claims require comparison substantiation. Every superlative or comparative claim needs a documented, current competitor check and named benchmark source.

No invented statistics. Any number in a generated asset must trace to a specific source in the brand's approved data room.
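
The deterministic filter mentioned in the first control can be sketched with regular expressions. Everything named here is an assumption for illustration: the `APPROVED_CLAIMS` entry, the pattern list, and the `flag_claims` function. The patterns cover only the phrases quoted above plus percentage figures, so a production filter would need a much wider set.

```python
import re

# Hypothetical approved-claims library: claim text -> substantiation reference.
APPROVED_CLAIMS = {
    "Rated 4.6 out of 5 by verified buyers": "dataroom/reviews-2026-q1.pdf",
}

# Patterns for the claim language named in the text, plus percentage figures.
CLAIM_PATTERNS = [
    re.compile(r"clinically\s+(shown|proven|tested)", re.IGNORECASE),
    re.compile(r"\b\d+\s+out\s+of\s+\d+\b", re.IGNORECASE),
    re.compile(r"#\s*1\b|\bnumber\s+one\b", re.IGNORECASE),
    re.compile(r"\b\d+(\.\d+)?\s*%"),
]


def flag_claims(copy_text):
    """Return sentences that match a claim pattern and are not pre-approved."""
    flagged = []
    # Naive sentence split; adequate for short ad copy in this sketch.
    for sentence in re.split(r"(?<=[.!?])\s+", copy_text.strip()):
        bare = sentence.rstrip(".!?")
        if bare in APPROVED_CLAIMS:
            continue  # exact match against the approved library
        if any(p.search(sentence) for p in CLAIM_PATTERNS):
            flagged.append(sentence)
    return flagged
```

Flagged sentences go to the reviewer; they are not rewritten automatically. Exact-match lookup against the library is intentionally strict, so a paraphrase of an approved claim is still flagged and has to be cleared.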

A model-generated claim without substantiation is the most likely 2026 enforcement action against an AI-assisted campaign. The FTC's 2025 guidance is unusually explicit: the brand remains liable, and "the AI did it" is not a defense.

A useful operational rule: if a generated claim can't be traced to a specific sentence in an approved source document within 60 seconds, it doesn't ship. Teams that enforce this rule ship faster net, because the late-stage surprises disappear.

Domain 4 — Data handling: prompts are regulated data

Prompts are data. Treat them with the discipline you apply to user records. The 2026 checklist requires a lawful basis for any personal data included in a prompt, a data-processing agreement with the generation vendor, a documented retention policy, and a review of whether the vendor trains on your prompts. GDPR, CCPA, HIPAA (for health-adjacent), and sector-specific rules all apply — and the prompt itself is the regulated artifact.

The concrete checklist:

No personal data in prompts unless the workflow has a lawful basis, a data-processing agreement, and a deletion path. Default answer: don't put PII into prompts.

Customer segments aren't prompts. Don't paste PII-adjacent segment descriptions into third-party models. Use aggregated segment labels only.

Prompt retention policy. How long are prompts and outputs stored, who stores them, and who can access them? Document the answers and enforce them.

Vendor review. Does the generation vendor train on your prompts? If yes, either contractually disable it or don't send sensitive content. Most enterprise vendor agreements allow opt-out; confirm per vendor.

Cross-border transfer. EU data sent to a US-hosted model raises a standard-contractual-clauses or adequacy-decision question. Map your data flows before shipping.

Audit access. Can privacy counsel retrieve all prompts touching a specific subject within a reasonable period? If not, the workflow is not GDPR / CCPA compliant.
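
The "no PII in prompts" default can be enforced with a screen that runs before any prompt leaves the building. The sketch below is an assumption-laden illustration: the two patterns catch only obvious emails and phone numbers, and `screen_prompt`, `safe_to_send`, and the `workflow_cleared` flag are hypothetical names. Real PII detection needs far broader coverage than this.

```python
import re

# Illustrative patterns only; names, addresses, and IDs are not covered.
PII_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "phone": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def screen_prompt(prompt):
    """Return the sorted names of PII patterns found in the prompt."""
    return sorted(name for name, pattern in PII_PATTERNS.items() if pattern.search(prompt))


def safe_to_send(prompt, workflow_cleared=False):
    # workflow_cleared means a lawful basis, a data-processing agreement,
    # and a deletion path have all been confirmed for this workflow.
    return workflow_cleared or not screen_prompt(prompt)
```

A prompt built from an aggregated segment label passes the screen. One that contains a customer email is blocked unless the workflow has been explicitly cleared.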

IAB Europe's 2026 guide has the most detailed vendor-review template. The common failure is treating prompts as throwaway text; regulators are increasingly treating them as processing activities subject to the same rules as any other data processing.

What should the audit trail contain per asset?

The audit trail is the evidence base when a regulator, a competitor, a consumer, or internal audit asks "why did this run." The 2026 standard is per-asset logging of prompt, model and version, brand spec version at approval time, reviewer identity, approval timestamp, and legal sign-off where applicable. Retain records for at least 24 months for general advertising and for up to seven years in regulated categories.

Per approved asset, store:

Prompt and model/version. The exact input; the exact model and version that produced it. Immutable, signed, timestamped.

System-prompt version. Brand guidelines and guardrails at time of generation — they change, and old assets should be defensible against the rules that were in effect when they shipped.

Brand spec version at time of approval. Tone, claim library, visual guidelines snapshot.

Reviewer identity and approval timestamp. Who signed off, when, and on what rubric.

Legal/compliance reviewer sign-off where applicable. For regulated categories or high-risk assets.

Measurement experiment cell and campaign ID. Tie-back to where the asset actually ran.

Post-publish surface log. Where the asset appeared, including AI-answer citations where the brand was cited.

If a regulator or internal audit asks "why did this run," the answer has to exist. Teams that retrofit audit trails after an incident usually can't. The technical implementation is modest — a structured log with signed records — but the organizational discipline to log every asset, every time, is where programs typically fail.
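
A structured log with signed records can be sketched with the Python standard library. The field names and the `build_record`, `sign_record`, and `verify_record` helpers are assumptions for illustration, and the record covers only a subset of the items listed above. HMAC is used here for brevity; it proves integrity only to parties holding the shared key, so a team that needs third-party verifiability would likely prefer asymmetric signatures and append-only storage.

```python
import hashlib
import hmac
import json
from datetime import datetime, timezone


def build_record(asset_id, prompt, model, model_version, system_prompt_version,
                 brand_spec_version, reviewer, campaign_id, legal_signoff=None):
    """Assemble one per-asset audit record (hypothetical field names)."""
    return {
        "asset_id": asset_id,
        "prompt": prompt,
        "model": model,
        "model_version": model_version,
        "system_prompt_version": system_prompt_version,
        "brand_spec_version": brand_spec_version,
        "reviewer": reviewer,
        "legal_signoff": legal_signoff,
        "campaign_id": campaign_id,
        "approved_at": datetime.now(timezone.utc).isoformat(),
    }


def _payload(record):
    # Canonical JSON so the same record always yields the same bytes.
    return json.dumps(record, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_record(record, key):
    """Return a copy of the record with an HMAC-SHA256 signature attached."""
    signature = hmac.new(key, _payload(record), hashlib.sha256).hexdigest()
    return {**record, "signature": signature}


def verify_record(signed, key):
    """Recompute the signature over every field except 'signature'."""
    body = {k: v for k, v in signed.items() if k != "signature"}
    expected = hmac.new(key, _payload(body), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, signed.get("signature", ""))
```

Any change to a stored field after signing, such as an edited prompt, makes `verify_record` return `False`. That property is what lets an old asset be defended against the rules in effect when it shipped.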

The checklist in one table

| Domain | Owner | Must-have control | Review cadence | Retention |
|---|---|---|---|---|
| Disclosure | Legal + Brand | Disclosure rules by category, applied per asset | Per asset | 24+ months |
| Rights | Business Affairs | Likeness, voice, music, provenance | Per campaign | 7+ years |
| Claims | Regulatory / Medical / Finance | Approved claim library, substantiation link | Per asset | 24+ months |
| Data | Privacy / Security | Prompt policy, vendor review, cross-border map | Quarterly | Per policy |
| Audit trail | Creative Ops | Prompt + reviewer + version per asset | Per asset | 24+ months; 7+ years in regulated categories |

In 2026, the brands that treat compliance as an operational discipline — named owners, per-asset checks, logged trails — ship faster, not slower. Compliance-as-afterthought is how campaigns get pulled post-launch; compliance-as-checklist is how campaigns survive scrutiny and renew.

Adopting this table as-is gives a working team somewhere to start. Most brands will adapt the ownership column to their org structure; the domains, controls, and cadences are the table stakes.

How do you actually operationalize the checklist?

You operationalize the checklist by embedding each domain into the existing creative-ops workflow rather than creating a parallel compliance process. That means the creative management system, the DAM, and the approval tool each carry the checklist fields; every approval step writes to the audit log; and the check isn't complete until all five domains return green. IAB Europe's 2026 guide includes reference integrations for the major creative-ops platforms.

A practical implementation path:

Map the checklist to your approval tool. Whatever your team uses for creative review — Frontify, Bynder, Aprimo, Adobe Workfront — add fields for each domain's check.

Embed disclosure and audit-trail logging in the generation pipeline. Every generated asset receives a tag the moment it's produced; the tag travels with the asset.

Make the claim library retrieval-backed. The model can only draw from approved sources; deterministic filters catch claim-pattern language that requires pre-clearance.

Schedule the quarterly data review. Calendar-driven, not event-driven. Privacy counsel reviews prompt flows, vendor training posture, and cross-border transfers every quarter.

Run a tabletop exercise quarterly. Simulate a regulatory complaint or consumer complaint. Confirm the evidence is retrievable within the required window.
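
The rule that a check is complete only when all five domains return green can be written as a small gate in the approval tool. This is a sketch: `DOMAINS` and `approval_gate` are hypothetical names, and the gate assumes each domain check has already been reduced to a boolean by its owner.

```python
DOMAINS = ("disclosure", "rights", "claims", "data", "audit_trail")


def approval_gate(checks):
    """Return (approved, blocking_domains) for one asset.

    `checks` maps a domain name to True (green) or False (red). A domain
    that is missing from the mapping blocks approval just like a red one.
    """
    blocking = [d for d in DOMAINS if checks.get(d) is not True]
    return (not blocking, blocking)
```

For example, an asset with a failed claims check and no audit-trail entry returns `(False, ["claims", "audit_trail"])`, which tells the reviewer exactly which owners to chase.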

Teams that operate this way typically add 2–4% to creative-ops cost and reduce incident-response cost by 60–80%. Ad Age's 2026 reporting documented several large advertisers whose total compliance cost fell after they standardized the checklist — because the before-state was reactive firefighting.

What are the biggest 2026 checklist mistakes?

The biggest 2026 checklist mistakes are treating compliance as a one-time campaign review, under-owning the prompt-data domain, assuming the model vendor handles substantiation, and failing to log audit trails for assets that "felt low-risk." Each of these shows up repeatedly in enforcement actions and post-mortems.

Specific recurring failures:

"Legal signs off once at the start." They can't. Generative output drifts; review must be per asset or per cell, not per campaign. This is the #1 issue surfaced by IAB Europe's 2026 guide.

"Disclosure kills performance." Well-placed disclosure is a non-issue for most audiences. Hidden AI use caught later is the real performance problem.

"The model's vendor handles compliance." They do not. The brand is the advertiser of record and the regulatory target.

"We don't need rights for synthetic talent." You might — depending on whose likeness the synthetic resembles, and what jurisdiction.

"We'll build the audit trail if we ever need it." Retrofitted trails don't hold up. The evidence is only useful if it was captured at the moment of generation.

"Prompts aren't personal data." Often they are. Customer descriptions, segment labels with PII-adjacent attributes, and internal notes all can qualify as regulated data.

What's changing in the regulatory landscape?

Two regulatory shifts are live through 2026: the EU AI Act advertising implementation notes are tightening disclosure and provenance expectations, and the FTC is explicitly including AI-generated claims in its enforcement discretion. WARC's 2026 Global AI Advertising Regulation Tracker adds national-level rules in the UK, Singapore, Brazil, Australia, Canada, and Germany. Brands without an audit trail in place will be caught short.

What's coming between mid-2026 and 2028:

EU AI Act enforcement ramps. Systemic-risk provisions and transparency rules translate into specific penalty frameworks for advertising.

FTC enforcement actions test the 2025 guidance. Expect the first precedent-setting cases to land by late 2026.

Machine-readable provenance standards become default. C2PA content credentials and IAB Tech Lab tags move from voluntary to expected across the supply chain.

Sector-specific rules layer on top. Pharma, finance, and children's advertising in several jurisdictions are adding AI-specific provisions.

Civil litigation from rightsholders scales. Under some theories of liability, training-data claims against model vendors eventually reach the brands that are customers of those vendors.

ANA's 2026 update specifically recommends quarterly counsel review of the regulatory map. The direction is toward more specificity and more enforcement, not less. The checklist is the practical mechanism for keeping pace.

How to act on this checklist

Name owners for each of the five domains this week. Build the claim library and disclosure rules next. Stand up prompt-and-approval logging inside the creative ops tool. Run the checklist on one campaign end-to-end before scaling it. Schedule the quarterly data review. Run a tabletop exercise. None of this is exotic — it is the operational discipline that turns compliance from a one-off legal memo into a ship-faster advantage.

A 30-60-90 operational plan:

Days 1–30. Name owners per domain. Document existing procedures or explicitly gap-flag the domain as missing. Choose one pilot campaign for end-to-end checklist application.

Days 31–60. Build or buy the claim library and substantiation linkage. Implement per-asset audit logging in the creative-ops tool. Stand up disclosure tagging.

Days 61–90. Run the pilot campaign through the full checklist. Publish the post-mortem internally. Scale to all campaigns. Schedule the quarterly data review and the tabletop exercise.

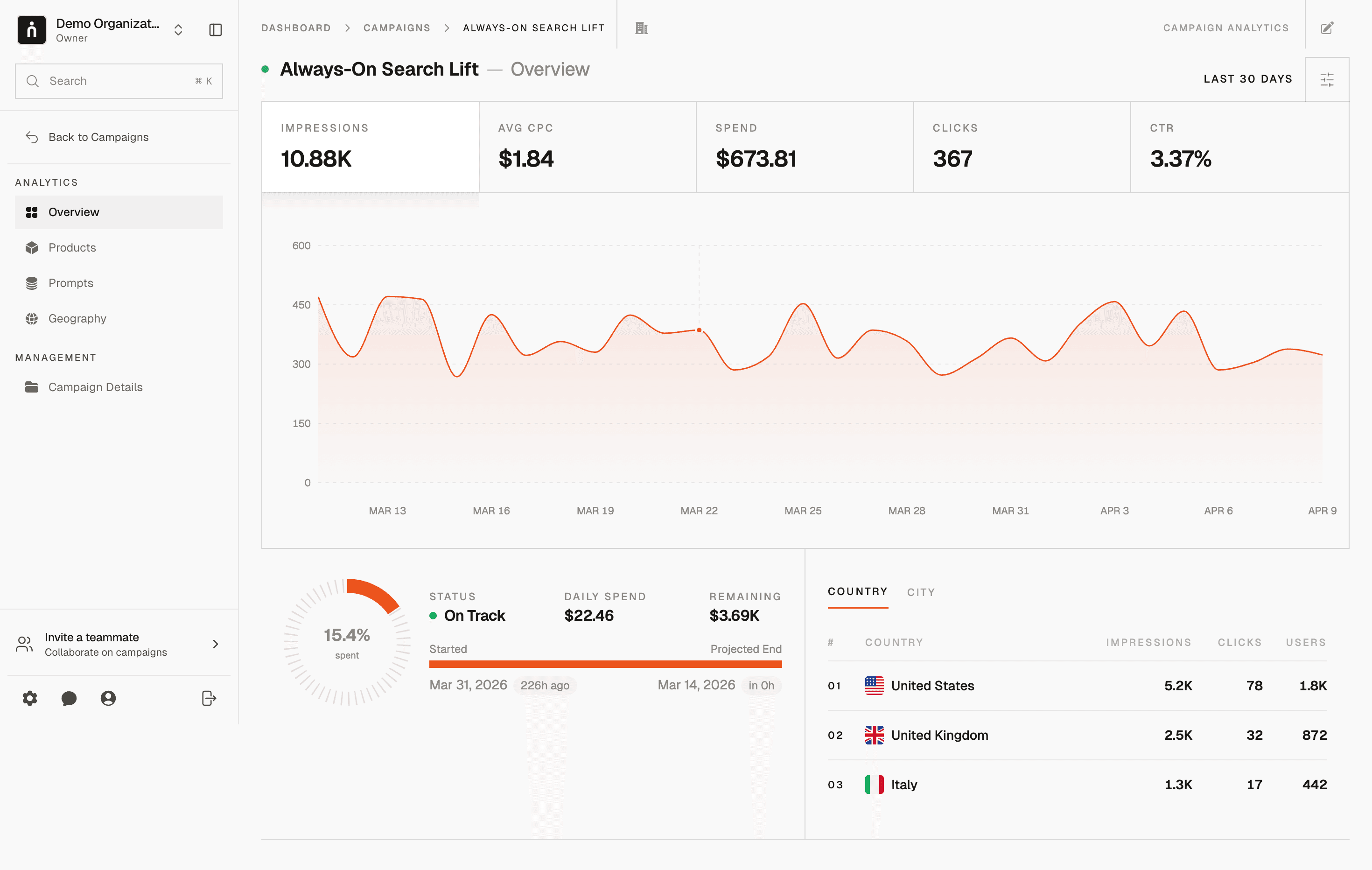

Thrad's measurement layer produces the generative-surface placement record that slots directly into the audit trail — so when someone asks where your brand appeared inside an AI assistant last quarter, the answer is documented. The checklist is the discipline; the logs are the evidence; the cadence is what keeps the system alive. Teams that operate all three keep pace with a regulatory environment that keeps getting more specific.


Citations:

IAB Tech Lab, "Generative AI in Advertising Standards v1," 2026. https://iabtechlab.com

FTC, "Guidance on AI-Generated Advertising Claims," 2025. https://ftc.gov

WARC, "Global AI Advertising Regulation Tracker," 2026. https://warc.com

Ad Age, "The New AI Ad Compliance Stack," 2026. https://adage.com

EU AI Office, "Advertising Implementation Notes for the AI Act," 2026. https://digital-strategy.ec.europa.eu

IAB Europe, "Generative AI Advertising Compliance Guide 2026," 2026. https://iabeurope.eu

ANA, "Legal and Regulatory Update for AI Advertising," 2026. https://ana.net

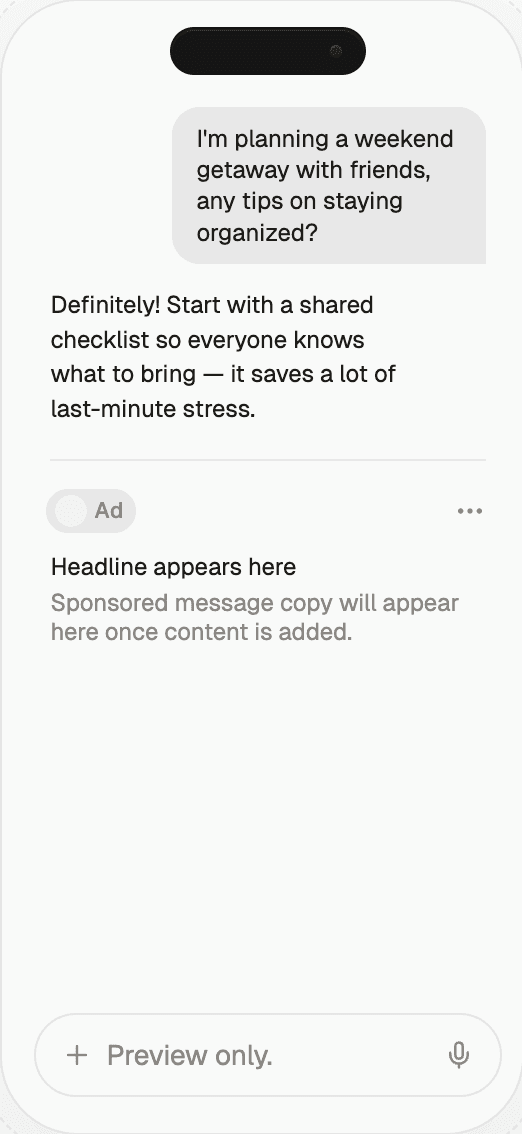

Be present when decisions are made

Traditional media captures attention.

Conversational media captures intent.

With Thrad, your brand reaches users in their deepest moments of research, evaluation, and purchase consideration — when influence matters most.

Date Published

Date Modified

Category

Advertising AI

Keyword

generative ai advertising compliance checklist