A useful generative AI advertising case study names the baseline,

shows the measurement cut, discloses the human-in-the-loop cost, and

defines "AI contribution" clearly. WARC's 2026 case-study standards

review found roughly 68% of published generative AI cases fail at

least two of those tests. Brands that build honest cases — even

smaller, less flashy ones — get renewal and internal funding; brands

leaning on flashy numbers without measurement discipline lose trust

on the second pilot.

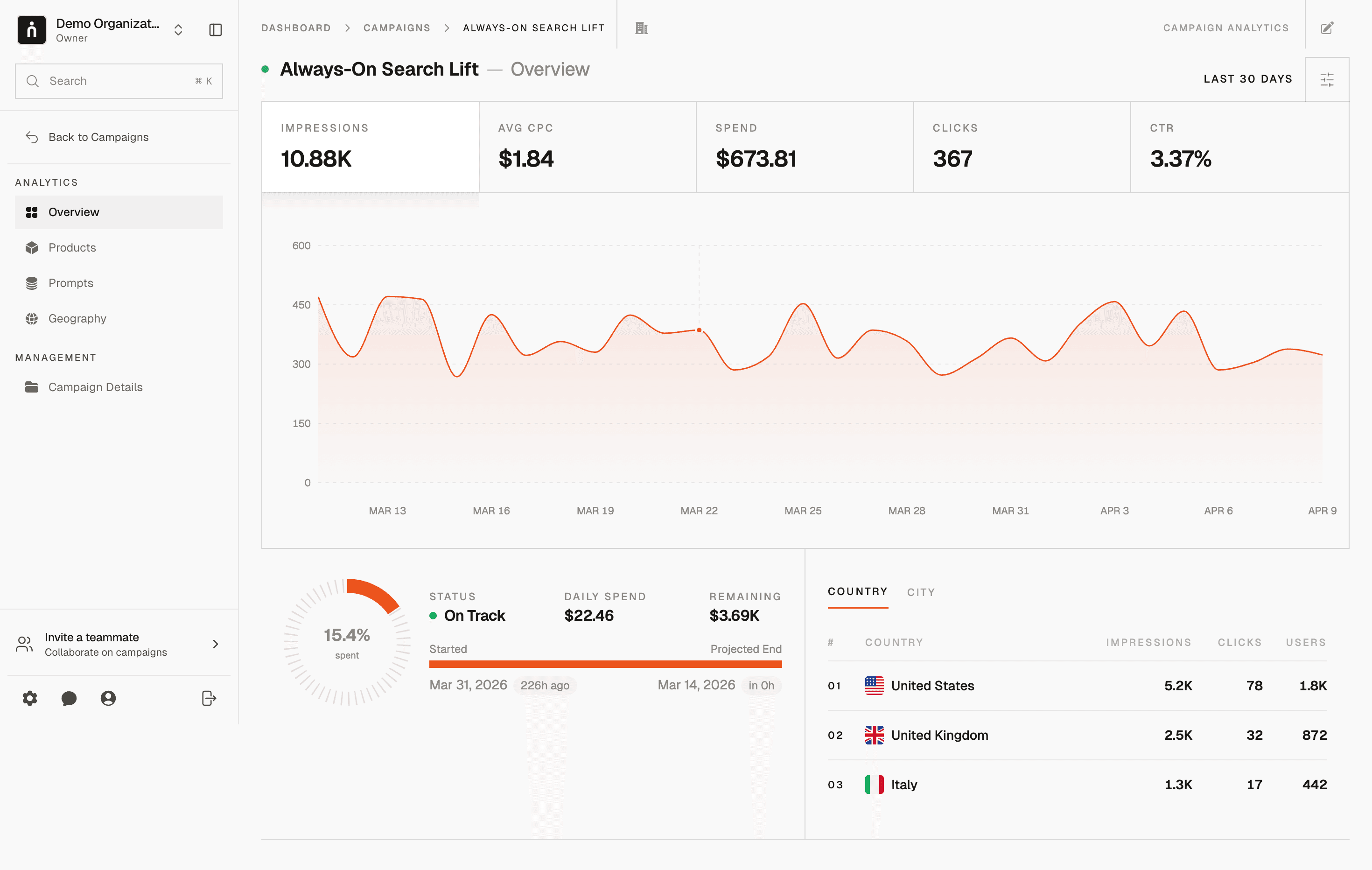

Start monetizing your AI app in under an hour

With Thrad, publishers go from first API call to live ads in less than 60 minutes. With fewer than 10 lines of code required, Thrad makes it easy to unlock revenue from your conversational traffic the same day.

Generative AI Advertising Case Studies | Thrad

Most 2026 generative AI advertising case studies overstate lift,

understate cost, and skip the baseline entirely. This is a teardown

framework for reading them critically — and a template for building

ones that survive procurement, finance, and in-house counsel review.

Generative AI advertising case studies flooded trade press through 2025 and early 2026. Most of them don't hold up to a careful read. WARC's 2026 case-study standards review found roughly 68% of published cases fail at least two of four basic credibility tests. This article is a framework for tearing down a case study and a template for building ones that survive scrutiny — whether you're a brand evaluating a vendor pitch or a practitioner trying to make a real internal case.

What should a generative AI advertising case study actually do?

A generative AI advertising case study should let a reader evaluate whether the claimed result would generalize to their own program. That requires answers to five questions: what did the brand do before AI, exactly where AI sits in the pipeline, what the measurement method was, what the human-in-the-loop cost was, and what was killed. Any case missing more than one of these belongs in the marketing folder, not the investment memo.

Unpacking each:

What did the brand do before AI? The baseline. Without this, a percentage lift is just a number. Campaign magazine's 2026 teardown series shows 42% of published cases skip or mis-specify the baseline.

Exactly where does AI sit in the pipeline? Creative, targeting, placement, measurement, or some mix. "We used AI" is not an answer; "We replaced manual variant production with Model X for 47 creative variants while holding audience targeting and budget constant" is.

What was the measurement method? A/B test, geo hold-out, matched markets, ghost ads, or converted lift. Each has different strengths; all require disclosure.

What was the human-in-the-loop cost? Prompt engineering time, review cycles, compliance review, revisions. Ad Age's 2026 reporting suggests typical unseen labor adds 30–120% to the pre-AI production cost.

What was killed, and why? High kill rates (30–70% of generated variants) are a sign of healthy governance, not failure. Zero kill rate is a warning.

A case that answers all five can be wrong but useful. A case that answers fewer than three has built its conclusion on evidence no buyer can verify.

The five teardown patterns

Pattern 1 — The missing baseline

Headline: "AI creative lifted CTR by 47%." Fine print: no control, no previous-period match, no mention of audience or budget changes. This is the single most common failure mode. Without a baseline, any percentage is just a number. WARC's 2026 review found 62% of published cases either omit the baseline or describe it so vaguely that the comparison can't be reconstructed.

Pattern 2 — The hidden human cost

Headline: "We produced 500 variants in a week with AI." Unmentioned: three senior creatives, two prompt engineers, and a compliance reviewer spent that week gating output. The true cost per approved variant was a reasonable number — but nothing close to "AI did it for free." Ad Age's 2026 reporting documented cases where the hidden labor was more expensive than the pre-AI production it replaced.

Pattern 3 — The scope creep

Headline: "AI campaign delivered 3x return." Read closer: the campaign mixed new creative, new audiences, new placements, and new promotion together. Which variable mattered? Impossible to say. Useful case studies hold every variable except AI constant — which usually requires a smaller, more boring test than a brand-lift story wants to be.

Pattern 4 — The cherry-picked window

Headline: "Sustained lift over eight weeks." Actual data: a strong first two weeks, back to baseline by week six, selectively reported. Honest case studies report the full tail and the decay curve. MMA Global's 2026 incrementality work shows generative AI creative decay curves are sometimes steeper than traditional creative — not always, but often enough that honest reporting matters.

Pattern 5 — The platform-only view

Headline: "ROAS up 2.4x." Missing: incremental lift measured outside the platform that is also the one selling the ad units. Self-reported platform ROAS and truly incremental ROAS are not the same number. IAB Tech Lab's 2026 attribution practices document explicitly names this as a known gap in published case studies.

Compare the five patterns

Pattern | What's missing | Good-case fix | Prevalence in WARC 2026 review |

|---|---|---|---|

Missing baseline | Control group or matched period | Named baseline, pre-registered control | 62% |

Hidden human cost | Labor accounting | Cost per approved asset, all-in | 54% |

Scope creep | Variable isolation | Hold every variable but AI constant | 41% |

Cherry-picked window | Decay curve | Full eight- or twelve-week tail | 37% |

Platform-only view | Independent measurement | Third-party incrementality cut | 46% |

The patterns overlap. A single weak case study typically exhibits two or three of them, which is why WARC's 2026 review could only clear ~32% of published cases as credible on basic tests.

Read every 2026 generative AI advertising case study with five questions in the margins: baseline, AI scope, measurement method, human cost, kill rate. If more than two are unanswered, the case doesn't support the conclusion — even if the numbers look impressive.

How do you tell a credible case from a vendor press release?

You tell a credible case from a vendor press release by checking for three disclosure markers: a named measurement partner independent of the platform running the campaign, a pre-registered baseline and method, and explicit human-in-the-loop cost accounting. Press releases almost never include all three because each one constrains the headline number the vendor wants to publish. Credibility is in the fine print; marketing impact is in the headline.

A practical read-through order for any case study:

Look at the methodology box first, not the headline. Is there an independent measurement partner? A pre-registered method? A named control?

Scan for labor disclosure. How many hours of prompt engineering, review, and compliance? If silent, assume the unreported labor inflated ROI by 30–120%.

Find the decay curve. If only a peak is shown, assume the curve drops off faster than the report suggests.

Check the AI scope description. "AI-powered" and "AI-optimized" are marketing terms. A credible case names the model, the pipeline step, and what human review gated the output.

Then look at the headline number — with context from the above four.

Campaign magazine's 2026 teardown series and WARC's standards work both follow this order. Buyers running serious procurement processes follow it too.

A template for building a credible case

The template that survives 2026 procurement has seven short sections and runs under 1,000 words. Written honestly, a credible case study lands between 700 and 950 words; the pressure to pad usually signals weaker evidence rather than more evidence. Buyers have become allergic to 3,000-word case studies with 50 words of method and 2,950 of atmospherics.

The seven sections:

Context. Brand, category, goal, timing. Two to four sentences.

Baseline. What we were doing before AI, measured the same way. A sentence on the control design.

AI intervention. Which step — creative, localization, placement, measurement — AI touched. What human review gated it. Named model and version.

Method. Geo hold-out, matched markets, ghost ads, or A/B. Named, pre-registered where possible.

Result. Lift vs. baseline, full decay curve, cost per approved asset, human-labor accounting.

Kill rate and learnings. What didn't work, what was pulled, what we'd change.

Replication cost. What another brand would need to reproduce the result.

What does a 2026 case-study rubric look like?

A 2026 case-study rubric scores a case on baseline integrity, method disclosure, AI scope specificity, labor accounting, and independent measurement presence — ideally on a five-point scale each, with credibility thresholds published. WARC's 2026 standards work proposed exactly this rubric; Campaign magazine's teardown series uses a simplified four-point version. Both are freely available and worth adopting internally.

An example five-dimension rubric:

Dimension | 1 (poor) | 3 (acceptable) | 5 (strong) |

|---|---|---|---|

Baseline integrity | Unnamed run-rate | Named prior period | Pre-registered control with matched audience |

Method disclosure | "A/B test" unnamed | Named method, brief | Named method, pre-registered, tool stack disclosed |

AI scope specificity | "AI-powered" | Named pipeline step | Model, version, human review gate, kill rate |

Labor accounting | None | Total hours | Hours by role with unit economics |

Independent measurement | Platform-only | Platform + named verifier | Independent incrementality by third party |

A case at 3.0 average or better is usually worth reading in detail. A case below 2.5 is press release. A case at 4.0+ is publishable-as-evidence in a procurement process. Applying the rubric to every case you encounter — vendor pitches, trade press coverage, industry awards submissions — sharpens the ability to spot weak evidence fast.

Why do credible cases actually win renewals?

Credible cases win renewals because procurement and finance teams remember. ANA's 2026 benchmark measured generative AI pilots supported by cases meeting the four-point credibility test and found they renew at 74%, versus 29% for pilots with weaker cases. Finance teams keep records, and the second-round procurement process penalizes vendors whose first-round cases fell apart under scrutiny.

Three specific mechanisms:

Procurement memory. Buyers who ran an AI pilot in 2024–2025 remember which cases held up. Vendors with weak cases lose RFP shortlists for the 2026 cycle.

Internal funding. The brand's own case study is the evidence used to request expanded budget. A case that survives an internal CFO review is what funds pilot 2 and pilot 3.

Industry reputation. Case studies in trade press compound — good ones set category pricing; weak ones set skepticism.

A credible, small, honest case study is worth more than a flashy unverified one. Finance teams remember — and so do the procurement officers who now keep a running ledger of which vendors' case studies survived teardown.

The asymmetry is real. A case study that overstates lift by 50% can cost the vendor the next renewal cycle and the next three RFPs. A case study that understates lift by 10% costs nothing and builds the trust that accelerates the next sale.

Common misconceptions

"Bigger numbers are more persuasive." In 2026 they're the opposite — reviewers treat "10x lift" as a red flag unless the method is airtight. ANA's benchmark explicitly notes this inversion.

"Case studies should be marketing assets first." They should be evaluation assets first. Good marketing follows from credible evidence; shortcutting evidence destroys marketing value on the second cycle.

"Only hero campaigns deserve a case study." Small, narrow, boring wins — a retail-media feed, a set of localized variants — produce the most reusable evidence. Campaign magazine's 2026 teardown series specifically highlighted "boring and replicable" as the strongest category.

"Platform dashboards are enough for a case study." They aren't. Platform-only measurement has an unavoidable conflict of interest that buyers now discount.

"Case studies need to cover a full year." They don't. A clean four- to eight-week test with a real baseline beats a sprawling annual narrative.

"The vendor writes the case; the brand approves." In 2026 the credible brands write their own and ask the vendor to verify. Writing a case study is a competitive skill.

What's changing in the case-study market?

Two shifts are visible, both of which pressure vendors toward honesty. First, trade press outlets are starting to gate case study coverage on method disclosure, which will weed out the weakest cases. Second, procurement and in-house counsel are asking for case-study-style evidence before signing AI tooling contracts, raising the bar on what brands accept from vendors. ANA's 2026 benchmark specifically recommends requiring the five-dimension rubric as part of RFP scoring.

Three specific market shifts through 2026 and into 2027:

Gated trade press. WARC, Campaign, and Ad Age have all signaled they will require minimum method disclosure for case-study publication starting mid-2026.

Procurement ledgers. Large buyers now maintain internal records of which vendors' cases held up under teardown. This data is shared informally across peer buyer networks.

Industry awards shift criteria. The major creative awards are adding effectiveness categories that require independent measurement evidence. Effie Awards, Cannes, and the ANA Genius Awards all have announced updates.

The direction is consistent: flashy-but-unsubstantiated wins are devaluing fast. Brands and vendors that built case-study rigor in 2024–2025 have a compounding advantage in 2026; those still relying on press-release cases will find the returns declining year over year.

How to build a case study that survives review

Pick a narrow scope. Pre-register your baseline and measurement method before the campaign runs. Track the human-in-the-loop cost weekly during the pilot. Report the decay curve alongside the peak. Name the measurement partner. Use the five-dimension rubric above as a self-check before publishing — a sub-3.0 score is a warning that the case won't survive teardown.

A checklist for the practitioner:

Before the campaign. Baseline documented and verified. Measurement method chosen and pre-registered. AI scope defined in writing — which step, which model, which human gate.

During the campaign. Weekly time-tracking by role on human-in-the-loop labor. Kill rate logged per batch. Early-signal decay monitoring.

After the campaign. Full-tail decay curve reported. Independent measurement cut included. Labor cost rolled into cost-per-approved-asset. Honest "what we'd change" section.

Thrad's measurement layer produces the placement and generative-surface cut that most 2026 case studies are missing — so when your finance team asks "was this incremental to search and social?" the answer is already in the results table. Credible cases aren't harder to write than press-release cases; they're just written differently, with evidence that anticipates the teardown instead of hiding from it.

ai advertising case study, generative ai campaign case study, ai ad results, ai marketing case studies

Citations:

WARC, "Measurement in AI Advertising: Case Study Standards," 2026. https://warc.com

IAB Tech Lab, "Attribution Practices for Generative Campaigns," 2026. https://iabtechlab.com

Ad Age, "Why AI Case Studies Are Failing Finance Review," 2026. https://adage.com

Campaign, "The Anatomy of a Credible AI Ad Case," 2026. https://campaignlive.com

ANA, "Generative AI Marketing Effectiveness Benchmark," 2026. https://ana.net

MMA Global, "Incrementality Measurement in AI Advertising," 2026. https://mmaglobal.com

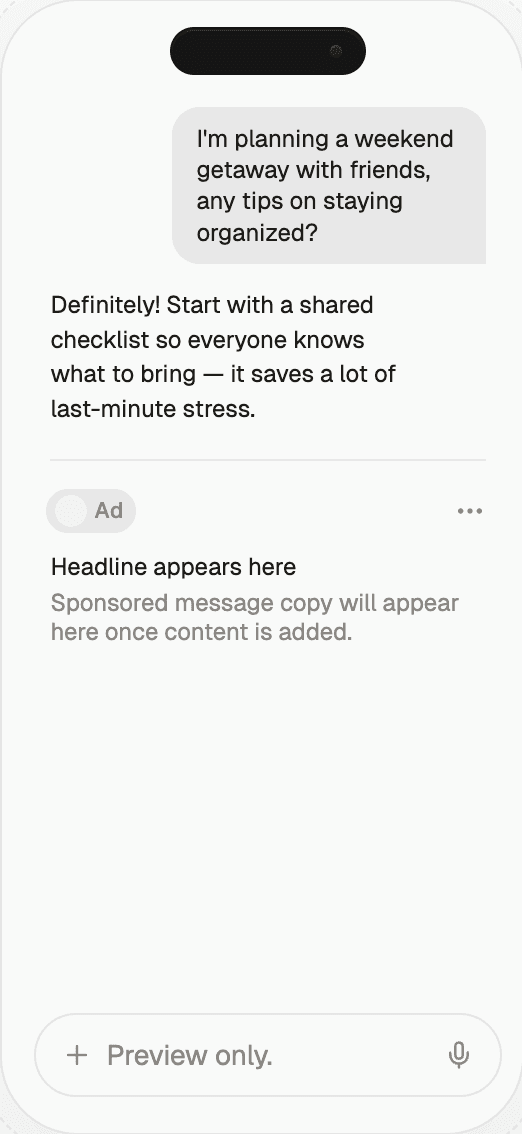

Be present when decisions are made

Traditional media captures attention.

Conversational media captures intent.

With Thrad, your brand reaches users in their deepest moments of research, evaluation, and purchase consideration — when influence matters most.

Date Published

Date Modified

Category

Advertising AI

Keyword

generative ai advertising case studies