In 2026, ChatGPT consumer subscriptions (Plus + Pro) generate roughly $4.3B annualized versus the OpenAI API's $2.8–3.2B, but the API has broader reach and funds most of the AI-native application layer. Subscriptions carry higher gross margin per dollar (~70% vs ~65% blended); the API carries more strategic surface area. Growth rates are converging as consumer subs plateau (~18% YoY) and API pricing compresses even as token volume triples.

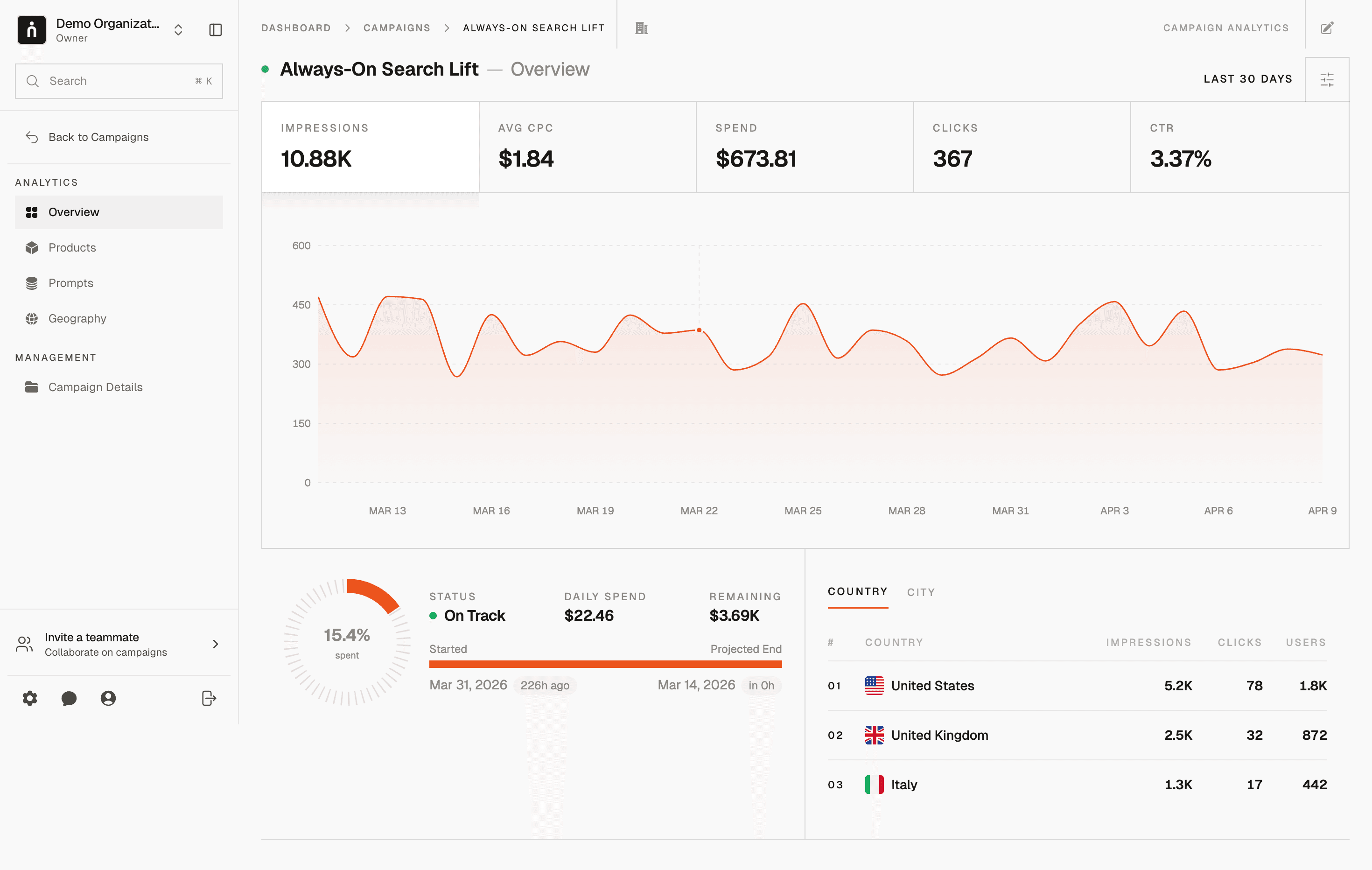


ChatGPT Subs vs API Revenue 2026 | Thrad

ChatGPT's consumer subscription line and OpenAI's API line are often bundled together in analyst decks, but they behave like different businesses. One is a consumer product with a plateauing addressable market; the other is a developer-infrastructure product with ongoing pricing pressure. Here's how they compare in 2026 and why the mix matters.

ChatGPT consumer subscription revenue and OpenAI API revenue behave like different businesses despite running on the same underlying models. In 2026, subscriptions are still the bigger line in absolute terms at roughly $4.3B annualized; the API, at $2.8–3.2B, has broader reach across the AI ecosystem; and the two are converging in dollar growth rate while diverging sharply in margin profile. For brands, developers, and investors trying to decide where OpenAI's business is going, the subs-versus-API split is the single most useful cut of the revenue picture.

What is the difference between ChatGPT subscription and API revenue?

ChatGPT subscription revenue comes from consumers paying a flat monthly fee ($20/mo for Plus, $200/mo for Pro) for access to the ChatGPT app at chatgpt.com and its mobile and desktop clients. API revenue comes from developers and businesses paying per million tokens for programmatic access to GPT-4o, GPT-5, o1, o3-mini, embeddings, DALL-E, Whisper, and specialized endpoints. One is a subscription SaaS product with a human in front of it; the other is a metered infrastructure product exposed through REST endpoints and SDKs such as the OpenAI Python and Node libraries. They share models, but not economics, buyer personas, or growth dynamics.
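To make the metered model concrete, here is a minimal Python sketch of how a per-million-token bill is computed for a single call. The $2.50/M input price echoes the GPT-4o figure cited later in this article; the $10/M output price and the token counts are hypothetical placeholders, not quoted rates.

```python
def api_call_cost(input_tokens: int, output_tokens: int,
                  input_price_per_m: float, output_price_per_m: float) -> float:
    """Dollar cost of one call when input and output are billed per million tokens."""
    return (input_tokens * input_price_per_m
            + output_tokens * output_price_per_m) / 1_000_000


# Hypothetical call: 2,000 prompt tokens and 500 completion tokens.
# $2.50/M input matches the GPT-4o price cited in this article; $10.00/M output is an assumption.
cost = api_call_cost(2_000, 500, input_price_per_m=2.50, output_price_per_m=10.00)
print(f"cost per call: ${cost:.4f}")  # cost per call: $0.0100

# How many such calls add up to one month of a $20 Plus subscription?
print(f"calls to reach $20: {20 / cost:,.0f}")  # calls to reach $20: 2,000
```

The contrast with the subscription line is the point: the API bill scales with every token, while a Plus subscriber pays the same $20 whether they send ten messages or hit the rate limit.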

The confusion comes from analyst decks that bundle them as "OpenAI revenue." Treating them as one line obscures three things: subs and API have different gross margins, they have opposite exposure to inference-cost deflation, and they serve different cohorts who respond differently to product changes. This article separates them cleanly.

How big is each line in 2026?

Consumer subscriptions still contribute more absolute dollars in 2026. ChatGPT Plus has an estimated 15–17 million active subscribers at $20/mo (roughly $3.6–4.0B annualized), and Pro adds $600–720M from an estimated 250k–300k subscribers paying $200/mo. Together they produce around $4.3B annualized, which press reporting places as the largest single category within OpenAI's total ~$12B run-rate at Q1 2026.
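The subscriber estimates above convert to annualized revenue with simple arithmetic (subscribers × monthly price × 12). This minimal sketch uses only the ranges stated in the text; the combined range it produces, $4.2–4.8B, places the ~$4.3B headline figure toward the low end of what the subscriber estimates allow.

```python
def annualized_revenue(subscribers: float, monthly_price: float) -> float:
    """Annual run-rate from a subscriber count and a flat monthly price."""
    return subscribers * monthly_price * 12


# Subscriber and price ranges as stated in the text.
plus_low, plus_high = annualized_revenue(15e6, 20), annualized_revenue(17e6, 20)
pro_low, pro_high = annualized_revenue(250e3, 200), annualized_revenue(300e3, 200)

print(f"Plus: ${plus_low / 1e9:.2f}B to ${plus_high / 1e9:.2f}B")  # Plus: $3.60B to $4.08B
print(f"Pro: ${pro_low / 1e6:.0f}M to ${pro_high / 1e6:.0f}M")      # Pro: $600M to $720M
print(f"Combined: ${(plus_low + pro_low) / 1e9:.2f}B to "
      f"${(plus_high + pro_high) / 1e9:.2f}B")
# Combined: $4.20B to $4.80B
```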

The API is the second-largest line, estimated at $2.8–3.2B annualized at the same reference point. It funds the AI-native startup ecosystem (coding assistants such as Cursor, Windsurf, and Cognition; customer-support platforms such as Intercom Fin, Sierra, and Decagon; content pipelines; voice-agent products) and powers most of the "AI features" inside incumbent SaaS products (Notion AI, Zendesk Copilot, Salesforce Einstein). Aggregate token volume is enormous and growing roughly 3× year-over-year; per-token pricing keeps falling, which is why dollar growth lags volume growth by a wide margin.

| Dimension | ChatGPT subs (Plus + Pro) | OpenAI API |
|---|---|---|
| Est. 2026 annualized revenue | ~$4.3B | $2.8–3.2B |
| Customer count | ~15–17M (Plus) + ~250–300k (Pro) | ~1M+ paying accounts |
| Average revenue per customer | $240/yr (Plus) / $2,400/yr (Pro) | Wide distribution; median ~$60/yr, long tail to $10M+/yr |
| Pricing | Flat monthly ($20 / $200) | Per million tokens, model-specific |
| Compute cost exposure | Capped by rate limits | Direct pass-through |
| Gross margin per dollar | 60–75% (Plus), 80–85% (Pro) | 55–75% blended |
| Churn dynamics | Consumer churn patterns, ~4–6%/mo | Developer workload shifts, harder to quantify |
| Upside per customer | Limited by tier cap | Unbounded with usage |

Figures are directional, sourced from The Information, Stripe's State of AI Monetization 2026, and SemiAnalysis.

Why do the two lines have different margins?

Subscription margins are structurally higher because the pricing is a flat fee against rate-limited usage. A Plus subscriber has a hard cap on how many GPT-4o messages they can send per three-hour window; that cap pins the maximum compute cost per subscriber at roughly $15–20 per month for a heavy user and closer to $4–6 for an average user. At $20/month in revenue, the spread is wide for an average user and thin for a heavy one. For Pro at $200/month, the spread is dramatic: even the heaviest Pro users rarely exceed $60–80/month in compute cost because of how the reasoning-model rate limits are structured.
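A quick way to see why rate limits protect subscription margin is to compute gross margin per subscriber, (revenue − compute cost) / revenue, for the cost figures quoted above. This minimal sketch uses the midpoint of each quoted range; the per-tier margin bands in the table depend on the mix of light and heavy users, which the article does not give.

```python
def gross_margin(revenue: float, compute_cost: float) -> float:
    """Share of revenue left after compute cost."""
    return (revenue - compute_cost) / revenue


# (monthly revenue, monthly compute cost), using midpoints of the ranges quoted in the text.
cases = {
    "Plus, average user": (20.0, 5.0),    # $4-6 compute
    "Plus, heavy user": (20.0, 17.5),     # $15-20 compute
    "Pro, heaviest user": (200.0, 70.0),  # $60-80 compute
}

for label, (revenue, cost) in cases.items():
    print(f"{label}: {gross_margin(revenue, cost):.1%}")
# Plus, average user: 75.0%
# Plus, heavy user: 12.5%
# Pro, heaviest user: 65.0%
```

Even the heaviest Pro user leaves a far wider spread than a heavy Plus user, which is the structural reason the Pro tier sits in the higher margin band.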

API margins sit lower because pricing is pass-through on compute. OpenAI prices each model at a markup over its own inference cost, and that markup has compressed steadily as Anthropic's Claude, Google's Gemini, and open-weight models (Llama, Mistral, DeepSeek) price competitively below GPT-4o and GPT-5 on comparable benchmarks. Net API gross margin blended across models is 55–75%, with the higher end on cached prompts and batch calls and the lower end on real-time frontier-model inference.
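Because the API is priced as a markup over inference cost, margin and markup are two views of the same ratio: with price = cost × (1 + markup), gross margin is markup / (1 + markup). The sketch below converts the article's 55–75% margin band into markups and shows, with hypothetical inputs, how a price cut that outpaces cost declines compresses margin. The function names and scenario values are illustrative assumptions.

```python
def markup_from_margin(margin: float) -> float:
    """Markup over cost implied by a gross margin (price = cost * (1 + markup))."""
    return margin / (1 - margin)


def margin_after_price_cut(margin: float, price_cut: float, cost_cut: float = 0.0) -> float:
    """Gross margin after cutting price and unit cost by the given fractions."""
    new_price = 1 - price_cut                 # price normalized to 1 before the cut
    new_cost = (1 - margin) * (1 - cost_cut)  # unit cost as a share of the old price
    return 1 - new_cost / new_price


for m in (0.55, 0.75):
    print(f"{m:.0%} margin = {markup_from_margin(m):.0%} markup over cost")
# 55% margin = 122% markup over cost
# 75% margin = 300% markup over cost

# Hypothetical: a 45% price cut with no matching drop in inference cost...
print(f"{margin_after_price_cut(0.75, 0.45):.1%}")        # 54.5%
# ...versus a 45% price cut fully matched by cheaper inference.
print(f"{margin_after_price_cut(0.75, 0.45, 0.45):.1%}")  # 75.0%
```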

Subscription revenue is mostly a function of addressable market, while API revenue is mostly a function of how cheap inference gets. One saturates; the other compounds with the AI-native app ecosystem. The result is that subs are a higher-margin, lower-growth business inside the same company that runs a lower-margin, higher-growth platform.

How fast is each line growing in 2026?

Consumer subscriptions are growing slowly by OpenAI's standards. The cohort of people willing to pay $20/mo for a general-purpose AI product is maturing; Plus subscriber growth has decelerated from doubling per year in 2024 to roughly 18% year-over-year at Q1 2026. Most new-subscriber volume in 2026 comes from international expansion (Southeast Asia, Latin America, India's urban tier-1 cities) and Pro-tier upsells rather than net-new US Plus subscribers. Pro itself is growing faster, an estimated 90% year-over-year, but off a smaller base.

API revenue is still compounding, but at a rate that reflects two offsetting forces. Usage volume is growing quickly: aggregate token throughput is up roughly 3× year-over-year as AI-native apps proliferate and incumbent SaaS products light up AI features. Per-token pricing keeps falling: GPT-4o input tokens dropped from $5/M to $2.50/M in the reference period, a 50% cut, and similar cuts landed on output tokens and embeddings. Net-net, API dollar revenue is growing at ~60% year-over-year, far slower than token volume but far faster than subscriptions.

| Line | Volume growth YoY | Price change YoY | Dollar revenue growth YoY |
|---|---|---|---|
| ChatGPT Plus | ~18% (subscribers) | Flat ($20/mo unchanged) | ~18% |
| ChatGPT Pro | ~90% (subscribers) | Flat ($200/mo unchanged) | ~90% |
| OpenAI API | ~200% (token volume) | ~-45% (per token) | ~60% |
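Each row follows a multiplicative identity: dollar growth = (1 + volume growth) × (1 + price change) − 1. This minimal sketch applies it to the table's approximate figures. Taken literally, 3× volume and a 45% price decline give about 65% dollar growth, so the ~60% figure implies a slightly steeper blended price decline of roughly 47%; both readings sit within the precision of these estimates.

```python
def dollar_growth(volume_growth: float, price_change: float) -> float:
    """Revenue growth from volume growth and price change (both as fractions)."""
    return (1 + volume_growth) * (1 + price_change) - 1


def implied_price_change(volume_growth: float, revenue_growth: float) -> float:
    """Price change that reconciles a volume growth rate with a revenue growth rate."""
    return (1 + revenue_growth) / (1 + volume_growth) - 1


print(f"API: {dollar_growth(2.00, -0.45):.0%}")  # API: 65%
print(f"Plus: {dollar_growth(0.18, 0.0):.0%}")   # Plus: 18%
print(f"implied API price change: {implied_price_change(2.00, 0.60):.1%}")
# implied API price change: -46.7%
```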

The divergence between API volume growth and API dollar growth is the single most important number in the OpenAI revenue story. It is the quantitative signature of a platform maturing.

What drives the differences in customer behavior?

Subscription customers and API customers behave like different species. A Plus subscriber uses ChatGPT through the app, hits rate limits, rarely thinks about token counts, and churns if the product feels stale or the competition looks more useful. Churn patterns look like those of a premium consumer app, roughly 4–6% monthly, with net retention supported by product updates (voice mode, Canvas, Operator) that make each month feel like progress.
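Monthly churn compounds, which is why a 4–6% monthly rate matters more than it sounds. This sketch computes how much of a fixed subscriber cohort remains after 12 months at a constant churn rate; it deliberately ignores reactivations and new sign-ups, so it illustrates cohort decay rather than total subscriber counts.

```python
def cohort_retention(monthly_churn: float, months: int = 12) -> float:
    """Fraction of a starting cohort still subscribed after `months`, at constant churn."""
    return (1 - monthly_churn) ** months


for churn in (0.04, 0.06):
    print(f"{churn:.0%} monthly churn -> {cohort_retention(churn):.0%} retained after 12 months")
# 4% monthly churn -> 61% retained after 12 months
# 6% monthly churn -> 48% retained after 12 months
```

Losing roughly two-fifths to one-half of a cohort each year is why product updates that keep each month feeling like progress carry so much weight on the subscription line.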

An API customer is a developer or a company. They care about milliseconds of latency, consistency of JSON output, function-calling reliability, context-window limits, rate-limit tiers, and dollar cost per call at scale. They do not churn in consumer patterns; they migrate workloads, which happens gradually and often partially. A large AI application company might run 90% of its tokens through OpenAI in Q1 and 70% in Q4 after standing up a secondary provider (typically Anthropic) for redundancy and for price leverage in contract renegotiation.
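The dimmer effect can be quantified for a single customer. Holding per-token price constant, OpenAI's revenue from that customer scales with its share of the workload times the workload's growth. The 90% and 70% shares come from the example above; the 50% workload growth is a hypothetical figure chosen to show how share loss can hide inside a growing account.

```python
def spend_ratio(share_start: float, share_end: float, workload_growth: float) -> float:
    """End-period / start-period spend with one provider, at constant per-token price."""
    return share_end * (1 + workload_growth) / share_start


flat = spend_ratio(0.90, 0.70, workload_growth=0.0)
growing = spend_ratio(0.90, 0.70, workload_growth=0.50)  # hypothetical growth rate
print(f"flat workload: {flat - 1:+.1%}")     # flat workload: -22.2%
print(f"workload +50%: {growing - 1:+.1%}")  # workload +50%: +16.7%
```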

A Plus subscriber churns. An API customer reshapes their workload mix. The first is a light switch; the second is a dimmer. That single difference explains why subscription revenue is more volatile month-over-month, while API revenue is more volatile quarter-over-quarter, with bigger deals swinging the totals.

What is the strategic role of each line?

Subscriptions are OpenAI's direct consumer relationship and the single biggest brand asset in AI. Plus and Pro give OpenAI a 15M+ paying-customer base it can ship features to, sell add-ons into (voice mode upgrades, longer context, agent capabilities), and eventually layer advertising and commerce onto. The strategic role is not the revenue line itself; it's distribution for every product OpenAI will ship next.

The API is OpenAI's platform position and the entry point for the entire AI-native app economy. Every AI product in 2026 makes a choice about which model to build on; OpenAI wants to be the default. The strategic role isn't margin; it's gravity. Margin could compress to zero on the API and OpenAI would still win if API gravity keeps ChatGPT at the center of the ecosystem.

These roles explain a lot of product decisions that look strange if you focus only on the P&L. OpenAI aggressively cuts API prices because platform gravity outweighs per-token margin. OpenAI doesn't cut subscription prices because subscription margin funds the consumer product roadmap. The two pricing strategies look contradictory unless you read them as different levers for different outcomes.

How do the two lines reinforce each other?

It's tempting to frame subs and API as competing for OpenAI's attention. They don't. The two lines reinforce each other through three channels.

First, brand pull. ChatGPT's consumer visibility is the single biggest driver of API adoption: when a developer picks a model provider for a new AI feature, "we use OpenAI" is a more intelligible story to their boss and their users than "we use Anthropic" or "we use Mistral." OpenAI's subscription-side product marketing is API marketing for free.

Second, feature pipeline. API capabilities (function calling, the Assistants API, Realtime audio, tool use, structured outputs) often ship on the API first and then get wrapped into ChatGPT consumer features. Subscribers benefit from the investment in API primitives; developers benefit from the validation loop in ChatGPT.

Third, talent and data gravity. The ecosystem of developers building on the API generates edge-case reports, bug reports, and integration patterns that feed back into model and product development. That same gravity makes ChatGPT the research frontier for what subscribers can ultimately do. OpenAI's best product decisions at the subscription layer are informed by what developers are already doing at the API layer.

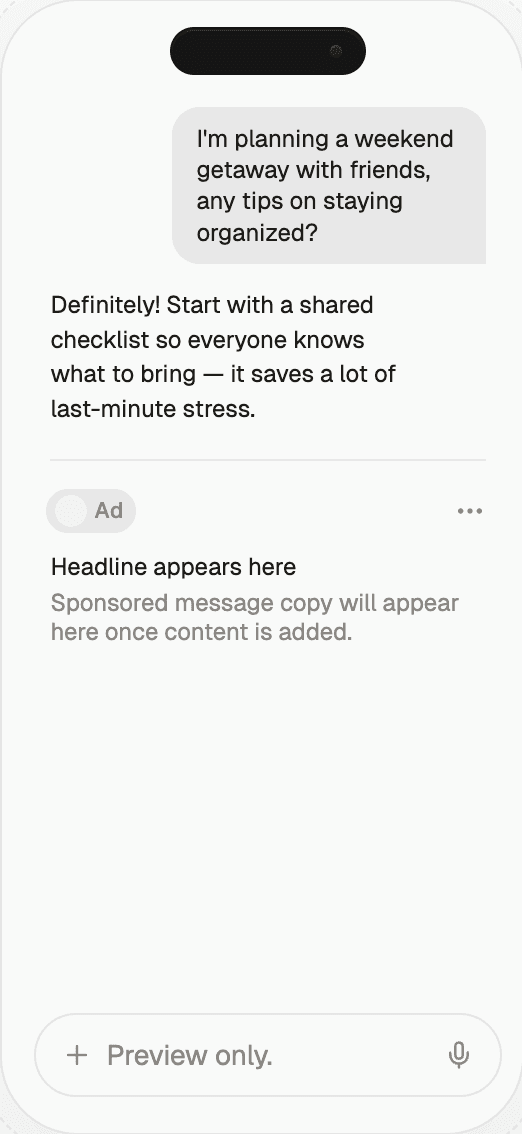

Why does this matter for brands?

For brand marketers, the sub-versus-API split affects two different things. Subscription users are consumers sitting inside the ChatGPT app: the audience that gets served sponsored search placements, shopping recommendations, and (increasingly) commerce-oriented suggestions. If you're planning paid inventory inside ChatGPT, this is your audience. API users are developers and companies building AI-native products where your brand might appear as a recommended answer, a structured data source, or a licensed content partner. If you want to show up as a citation inside a product built on OpenAI's models, this is the surface that matters.

The practical upshot: a brand's AI-visibility strategy is not one workstream but two. The content and structured-data work that makes your brand citable at the API layer is different from the ad and commerce work that makes your brand present at the subscription layer. Both surfaces matter; the tactics are not interchangeable.

Common misconceptions

"The API is just a cheaper version of ChatGPT." It's a different product. The API has no app layer and no consumer search surface, and it offers conversation memory and built-in tools only if you opt into a stateful interface such as the Assistants or Responses API. It's infrastructure; you build the app on top.

"Subscriptions are dying because power users switch to the API." Not really, and the data doesn't support it. A minority of Plus users ever touch the API; most stay on the app because they want the product experience, not raw model access. Cannibalization is a rounding error.

"API revenue is higher-margin than subs." False in 2026. Per-token pricing compression has pulled API margins down relative to subs. The reverse was briefly true in 2023; it hasn't been true for two years.

"OpenAI will cannibalize the API with first-party apps." Possible over time (Operator and Agents point in that direction), but in 2026 the API is a growing line, not a shrinking one, and OpenAI's own product-sprawl strategy still needs a platform underneath.

"The two lines are about to merge." There's product-level convergence (Canvas uses the same stack as the Assistants API, for example), but the revenue lines are tracked separately and behave differently. Analysts who collapse them will keep misreading the growth curve.

What comes next?

Expect the subs-vs-API gap to narrow through 2026 and 2027. Consumer subscriptions will plateau in dollar terms as Plus saturates US/EU demand and Pro's small base can only grow so fast in absolute terms. API usage will keep compounding as the AI-native app ecosystem matures and as incumbent SaaS products ship deeper AI features. Advertising revenue will increasingly blur the line: paid placements inside ChatGPT are technically a consumer-surface phenomenon but generate a revenue stream that behaves more like enterprise CPM than like consumer subs.

The 2027 revenue mix will look structurally different from 2026's. Three specific predictions worth tracking:

API will approach subscription revenue: not overtake it, but narrow the gap from $1B+ in 2026 to $200–400M by the end of 2027.

Enterprise seats will exceed both: compounding at ~80% YoY, enterprise is on track to become the largest single line by late 2027 on its current trajectory.

Advertising will blur the consumer surface: by the end of 2027, subscription-line ARPU will effectively include an ad-revenue component that makes "subscription revenue" a less clean number than it is today.

How to act on this as a brand

The practical takeaway: if your audience lives inside the ChatGPT app, subscription-tier advertising and licensed-content placements are the surface you need to invest in. If your audience builds or uses AI-native products, you want your data, brand, and content to be cited cleanly when those products query the API. Most brands in 2026 need both workstreams running in parallel.

On the subscription side, the mechanics are the familiar ones: commercial-intent prompts, sponsored placement auctions, shopping suggestion inventory, licensed feeds. On the API side, the mechanics are less familiar and more technical: content that parses cleanly into retrieval-augmented generation pipelines, structured data with explicit attribution markers, and authoritative third-party citations that AI apps will surface as sources. Thrad helps brands show up in both, measuring generative-surface presence and placing into AI-advertising surfaces with integrity on the consumer side, while making sure content is well-structured for API-driven citations on the developer side.
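One widely used way to attach explicit attribution to web content is schema.org structured data embedded as JSON-LD. The sketch below builds such a block in Python using standard schema.org `Article` properties (`headline`, `author`, `publisher`, `datePublished`, `url`, `citation`). Every value is a hypothetical placeholder, and whether a particular AI app's retrieval pipeline reads JSON-LD is an assumption, not something this article establishes.

```python
import json


def article_jsonld(headline: str, author: str, publisher: str,
                   date_published: str, url: str, citations: list[str]) -> dict:
    """Build a schema.org Article object with explicit authorship and sources."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "publisher": {"@type": "Organization", "name": publisher},
        "datePublished": date_published,  # ISO 8601 date
        "url": url,
        "citation": citations,             # sources the article relies on
    }


# Hypothetical placeholder values, for illustration only.
data = article_jsonld(
    headline="How Example Co. tests its products",
    author="Jane Doe",
    publisher="Example Co.",
    date_published="2026-01-15",
    url="https://www.example.com/testing",
    citations=["https://www.example.org/independent-review"],
)

# Emit the object wrapped in a JSON-LD script tag for the page's HTML.
print('<script type="application/ld+json">')
print(json.dumps(data, indent=2))
print("</script>")
```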


Citations:

OpenAI, "Pricing and Plans — ChatGPT and API," 2026. https://openai.com/pricing

The Information, "OpenAI consumer vs API revenue split," 2026. https://theinformation.com

Bloomberg, "API pricing compression across frontier model providers," 2026. https://bloomberg.com

Stripe, "State of AI Monetization 2026," 2026. https://stripe.com

SemiAnalysis, "GPU inference economics at scale," 2026. https://semianalysis.com



Category

Advertising AI
