Most AI chatbot apps will not survive on a single revenue model in 2026. Subscription alone monetizes roughly 2–5% of free users at typical freemium conversion rates, which is not enough to cover inference costs on the other 95%. The winning pattern for consumer chat apps is freemium-plus-ads — contextual ad inventory on the free tier funds the GPU bill while a paid tier keeps power users ad-free. Prosumer and B2B apps lean subscription-first. Usage-based pricing fits API-wrapping tools. Licensing is the dark-horse channel nobody talks about but several serious teams are already monetizing.

How to Monetize an AI Chatbot App (2026) — Thrad

You built an AI chatbot app. Now it has to pay for its GPUs. This is the 2026 pillar guide to every live monetization model — subscription, freemium plus ads, usage-based, licensing, affiliate — with honest economics per model and a decision matrix that tells you which one fits your product, stage, and audience.

Category: Publisher Monetization

Keyword: how to monetize an ai chatbot app


You built an AI chatbot app. The product works. Users engage. And every month, the inference bill gets bigger than the revenue line. This is the default failure mode of consumer AI apps in 2026: traction without economics. The fix is not a clever pricing page — it's a deliberate monetization architecture that matches the revenue model to the user segment, the product stage, and the structural cost of serving one more chat.

This is the pillar guide. It covers every monetization model an AI chatbot app can run in 2026 — subscription, freemium plus ads, usage-based, licensing, affiliate — with honest per-model economics, the stage at which each starts to matter, and a decision matrix that tells you where to start if you're building today. It skews toward the consumer chat case because that's where the economics are hardest, but the prosumer and B2B sections are explicit about where the playbook diverges.

The short version: for consumer chat apps, freemium-plus-ads is the structural answer. For prosumer tools, hard-paywall subscription wins. For developer-facing wrappers, usage-based fits the cost shape. Licensing is underrated at every stage. Everything else is a combination of these four primitives.

What does "monetize an AI chatbot app" actually mean in 2026?

Monetizing an AI chatbot app means generating enough revenue per user to cover the marginal inference cost of serving that user and contribute to fixed costs and growth. In 2026, that math has two new variables: GPU cost per conversation is unusually high relative to traditional app unit economics, and AI apps churn about 30% faster than non-AI apps, per RevenueCat's 2026 data.

The first variable forces a revenue model that scales with consumption, not just with sign-ups. The second forces a model that can monetize users quickly before they leave. The combination explains why pure-subscription freemium — the default model for consumer apps in the 2015–2023 era — breaks down on AI chat products. Subscription alone monetizes a tiny fraction of users at a tiny fraction of sessions.
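To make the coverage problem concrete, here is a minimal sketch of the monthly math for a subscription-only freemium app. Every input in the example is a hypothetical assumption chosen for illustration (only the 2–5% conversion range comes from this guide), and the model ignores churn, payment fees, and fixed costs.

```python
from dataclasses import dataclass


@dataclass
class FreemiumInputs:
    """Hypothetical monthly inputs; none of these figures come from a real app."""
    monthly_users: int         # all active users, free and paid
    paid_conversion: float     # share of users who subscribe (guide cites 2-5%)
    price_per_month: float     # subscription price in USD
    sessions_per_user: float   # average chat sessions per user per month
    cost_per_session: float    # marginal inference cost per session in USD


def monthly_contribution(inputs: FreemiumInputs) -> float:
    """Subscription revenue minus inference cost across all users, paying or not."""
    revenue = inputs.monthly_users * inputs.paid_conversion * inputs.price_per_month
    inference_cost = (
        inputs.monthly_users * inputs.sessions_per_user * inputs.cost_per_session
    )
    return revenue - inference_cost


example = FreemiumInputs(
    monthly_users=100_000,
    paid_conversion=0.03,
    price_per_month=15.0,
    sessions_per_user=40,
    cost_per_session=0.02,
)
print(f"Monthly contribution: ${monthly_contribution(example):,.0f}")
```

With these assumed inputs, subscription revenue is about $45,000 a month against about $80,000 of inference cost, so the contribution is negative. The point of the sketch is that the cost term multiplies across every user while the revenue term only multiplies across the converting minority.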

Monetization in 2026 also means choosing between models that capture intent (ads, affiliate, licensing) versus models that capture willingness-to-pay (subscription, usage-based). Chat apps sit in an unusually good position to run both because every prompt is a structured intent signal. That is exactly why ad networks such as Thrad's AI ad platform for publishers have built supply-side infrastructure that lets chat and LLM apps plug into paid demand without building their own ad operation.

Why does monetization matter more for AI apps than for classic mobile apps?

Every chat costs money. A classic mobile game or utility app serves most of its free users at near-zero marginal cost — CDN bandwidth, database reads, maybe a push notification. An AI chatbot app spends real GPU-seconds on every session. That structural difference changes the math of free users from "marketing expense" to "variable cost center," and it's why monetization has to be designed in, not bolted on.

RevenueCat's 2026 report found that AI-powered apps generate 41% more revenue per payer than non-AI apps over twelve months, but they churn about 30% faster. Higher ARPU, weaker retention. You have to monetize faster because the window is shorter.

The downstream implication: a monetization plan that assumes traditional mobile-app LTV curves will silently underperform. Modeling has to use AI-app retention benchmarks, price in shorter payback periods, and preserve the option to monetize free users directly (via ads or affiliate) rather than waiting on a conversion event that most of them will never hit.

Here's the quick reference for how the economics differ between a classic free app, an AI chat app, and a premium prosumer AI tool:

| Dimension | Classic free mobile app | AI consumer chat app | Prosumer AI tool |
|---|---|---|---|
| Marginal cost per session | Near zero | $0.005–$0.10+ | $0.05–$1.00+ |
| Free-user monetization | Optional | Required | Optional |
| Day-35 trial-to-paid | 2–3% freemium | 2–5% freemium | 10–15% hard paywall |
| Median blended ARPU | $0.10–$1 | $1–$4 | $15–$30 |
| Churn (relative) | Baseline | ~1.3x baseline | ~1.1x baseline |
| Ad suitability | Already baked in | Emerging (2025+) | Poor fit |
| Licensing suitability | Rare | Strong (content + intent) | Moderate |

The takeaway is that classic free-to-play monetization intuitions are wrong for AI. Free users are not loss leaders converting slowly uphill; they are a cost center that must be directly monetized or aggressively capped.

How do subscriptions work as a monetization model for AI chat apps?

Subscriptions work well as the primary revenue line for AI chat apps when the product produces a clear, repeated artifact that users save and return to — research summaries, code generation, long-form writing, structured analysis. They work poorly as a sole revenue line when most users chat casually and never hit a moment where they would pay to keep going.

The economics are dictated by two numbers: conversion rate from free to paid, and churn. RevenueCat's 2026 State of Subscription Apps report puts the median freemium day-35 trial-to-paid conversion at 2.1%, versus 10.7% for hard paywalls — roughly 5x better on the hard paywall. But hard paywalls have narrower funnels. The winning subscription model is the one that matches your audience's intent: if users arrive knowing they want the artifact, hard paywall. If they arrive curious, freemium with a clear value unlock.
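The trade-off between a higher conversion rate and a narrower funnel can be checked with a few lines of arithmetic. The sketch below uses the two median conversion rates quoted above; `funnel_share` (the fraction of installs that stay in the funnel) and the install count are hypothetical parameters introduced here for illustration, not RevenueCat figures.

```python
FREEMIUM_CONVERSION = 0.021      # median day-35 conversion cited above
HARD_PAYWALL_CONVERSION = 0.107  # median day-35 conversion cited above


def paying_users(installs: int, funnel_share: float, conversion: float) -> float:
    """Expected payers: installs that stay in the funnel times the conversion rate."""
    return installs * funnel_share * conversion


def break_even_funnel_share() -> float:
    """Share of installs a hard paywall must retain to match a freemium funnel
    that keeps every install (funnel_share = 1.0)."""
    return FREEMIUM_CONVERSION / HARD_PAYWALL_CONVERSION


installs = 50_000  # hypothetical monthly installs
freemium = paying_users(installs, 1.0, FREEMIUM_CONVERSION)
narrow_paywall = paying_users(installs, 0.15, HARD_PAYWALL_CONVERSION)
wide_paywall = paying_users(installs, 0.30, HARD_PAYWALL_CONVERSION)

print(f"Break-even funnel share: {break_even_funnel_share():.1%}")
print(f"Freemium payers: {freemium:.0f}")
print(f"Hard paywall payers at 15% / 30% funnel share: "
      f"{narrow_paywall:.0f} / {wide_paywall:.0f}")
```

Under this simplified model the hard paywall only wins if it keeps roughly a fifth or more of the installs a freemium funnel would keep. Below that, the 5x conversion advantage is eaten by the narrower funnel.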

For AI chat apps specifically, the subscription models that are earning real revenue in 2026 cluster into three patterns:

- Flat-tier premium ($10–$20/mo). ChatGPT Plus at $20/mo is the reference point. Works when the premium tier offers meaningful capacity, model access, or speed versus the free tier. About 15M ChatGPT Plus subscribers as of mid-2025 per Sacra data.
- Prosumer / Pro tier ($30–$200/mo). Claude Pro, ChatGPT Pro at $200/mo, Perplexity Pro. Targets users whose work output depends on the tool (researchers, consultants, developers, writers) and who treat the spend as a business expense.
- Team / small business ($25–60/seat/mo). Multi-seat plans with shared workspaces, admin controls, and higher usage ceilings. Higher LTV per account, longer contracts, lower churn.

Subscription alone works for tool-shaped products where the median user session ends in a save-able output. Subscription alone fails for companion-shaped products where users chat for entertainment, emotional support, or casual exploration — the session ends and they close the app.

What is the freemium-plus-ads model and why does it win for consumer chat?

Freemium-plus-ads is a hybrid model where free users see contextual ads in exchange for free access, and paid subscribers pay to remove them. It wins for consumer chat apps in 2026 because it is the only structure that monetizes the ~95% of users who will never subscribe while still offering a clean paid path for the ~5% who value an ad-free experience.

The signal is OpenAI's February 2026 launch of ads inside the free and $8/mo Go tiers of ChatGPT. By six weeks in, annualized ad revenue had crossed $100M, per reporting from Humai and others. That number is not a ceiling — it's a first-quarter ramp on the largest consumer AI app in the world. OpenAI's own projections shared with investors target $2.5B in 2026 ad revenue, $11B in 2027, and $25B by 2028. Every consumer chat app with a meaningful free user base now has a template to follow.

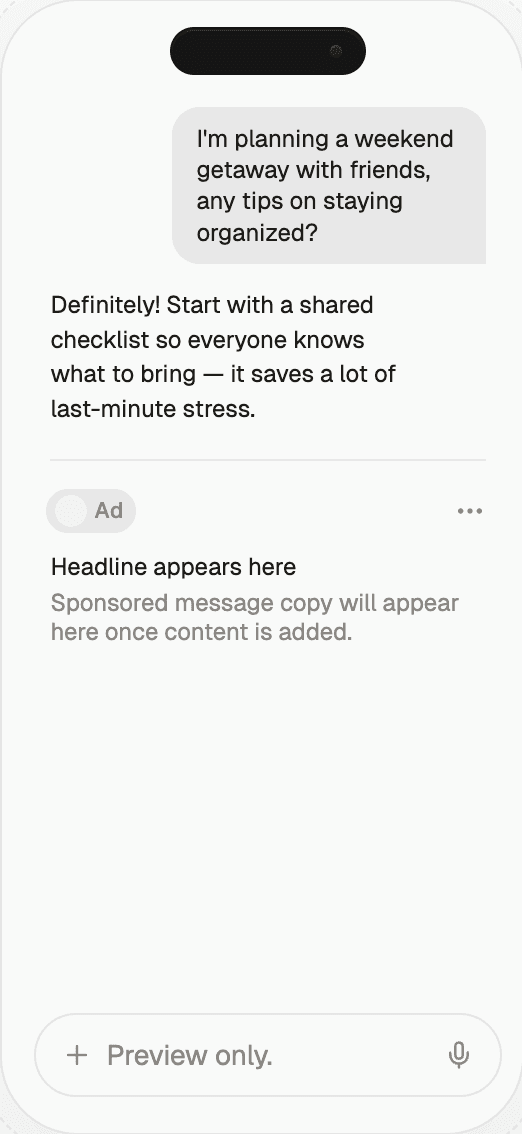

The model works because chat is a structured intent surface. Every prompt is a hand-raise: the user has told you what they want. That makes conversational ad inventory uniquely high-intent, closer to a Google search ad than a Facebook display impression. It's also why Thrad positions its AI ad marketplace infrastructure as a publisher-side platform for AI app builders who want ad revenue without building their own sales team, intent classifier, brand-safety stack, or direct advertiser relationships.

Here's the freemium-plus-ads economics template an AI chat app should run through before committing:

| Input | Low case | Middle case | Aggressive case |
|---|---|---|---|
| WAU | 100,000 | 500,000 | 2,000,000 |
| Prompts per WAU / week | 10 | 20 | 30 |
| Ad-eligible prompts | 15% | 25% | 35% |
| Fill rate | 60% | 75% | 90% |
| RPM on ad-eligible | $5 | $15 | $30 |
| Weekly ad revenue | ~$450 | ~$28K | ~$567K |
| Annualized ad revenue | ~$23K | ~$1.5M | ~$29.5M |

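Those numbers are illustrative (real RPMs vary widely by vertical, creative, and network), but they show how quickly the ad line scales with free-tier volume. For comparison, a freemium subscription converting 2–5% of those same WAUs at $10–$20 a month would gross roughly $1.2M–$6M a year in the middle case, before churn. On these inputs the ad line is comparable to the low end of that range in the middle case and only clearly overtakes subscription in the aggressive case, which is why ads matter most at consumer scale. The two lines monetize different users, so most apps should run both simultaneously.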
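The template above reduces to one multiplication chain, sketched below so you can plug in your own inputs. The class and function names are made up for this example. It assumes RPM is applied to filled ad-eligible prompts, which is the reading that reproduces the table's totals.

```python
from dataclasses import dataclass


@dataclass
class AdScenario:
    wau: int                   # weekly active users
    prompts_per_wau: float     # prompts per user per week
    ad_eligible_share: float   # share of prompts suitable for an ad
    fill_rate: float           # share of eligible prompts that receive an ad
    rpm: float                 # USD per 1,000 filled impressions


def weekly_ad_revenue(s: AdScenario) -> float:
    """Filled impressions per week, divided by 1,000, times RPM."""
    impressions = s.wau * s.prompts_per_wau * s.ad_eligible_share * s.fill_rate
    return impressions / 1000 * s.rpm


def annualized_ad_revenue(s: AdScenario) -> float:
    """Weekly revenue extrapolated over 52 weeks, with no growth or seasonality."""
    return weekly_ad_revenue(s) * 52


SCENARIOS = {
    "low": AdScenario(100_000, 10, 0.15, 0.60, 5),
    "middle": AdScenario(500_000, 20, 0.25, 0.75, 15),
    "aggressive": AdScenario(2_000_000, 30, 0.35, 0.90, 30),
}

for name, scenario in SCENARIOS.items():
    print(f"{name}: weekly ${weekly_ad_revenue(scenario):,.0f}, "
          f"annualized ${annualized_ad_revenue(scenario):,.0f}")
```

Because every input multiplies, a modest improvement in each one compounds: the aggressive case has 20x the users of the low case but well over 1,000x the revenue.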

Consumer AI chat apps that skip ads are leaving what can become, at scale, their single largest revenue line on the table. Subscription converts a minority of users; ads monetize the majority; combined, the two produce structurally higher total revenue than either alone.

When should an AI chat app turn on ads?

Turn on ads once you hit three conditions: (1) WAU is high enough that even low fill rates produce material revenue (typically 50K+ WAUs, though ad networks set their own floors), (2) inference cost per free user exceeds what you are capturing via subscription on that user, and (3) you have a defensible ad format that doesn't degrade the chat experience.

Condition three matters because poorly placed ads destroy retention. Well-designed conversational ads — inline sponsored suggestions, contextual product cards after a relevant answer, sponsored follow-up questions — tend to produce engagement similar to organic answers because the user is already in commercial intent mode. Poorly designed ads — interstitials, mid-conversation pop-ups, fake answer cards that blur the organic/paid line — nuke both retention and trust.

How does usage-based pricing fit AI chatbot apps?

Usage-based pricing charges per unit consumed — tokens, credits, compute time, queries, or workflows completed. It fits AI chatbot apps when usage varies widely between light and heavy users, and it fits developer-facing chat wrappers better than consumer-facing apps. Per Metronome's 2026 analysis, 77% of the largest software companies now include consumption-based pricing in their revenue mix, usually as part of a hybrid structure.

The structural logic: your cost of serving a free user scales with their usage. Flat subscription decouples revenue from cost in a way that works when cost is near-zero but breaks when cost is $0.01–$1.00 per session. Usage-based re-couples them. The user who sends 500 prompts a day generates real cost; you need to either cap them or charge them for it.

Implementation patterns that work in 2026:

- Credit pools. Allocate N credits per month in the subscription base; credits deplete by input/output token count. Common among prosumer AI tools. Cursor, ElevenLabs, and Perplexity all run variants.
- Metered overage. Flat base price with metered charges above a usage threshold. Predictable floor, protected downside.
- Pay-as-you-go. No subscription; users top up. Works for occasional, high-intent usage. API-wrapper products often start here.
- Hybrid. Subscription plus usage-based overage plus API access. The pattern across the top AI SaaS companies.

Usage-based pricing is a lever, not a model. Most AI chat apps running it also run a subscription tier with included credits and an ad-supported free tier, so the three-layer stack becomes: free (ads) → subscription (bundled credits) → overage (metered). That's the structural answer for any chat app whose power users consume 10–100x more than median users.
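A credit pool with metered overage is simple to express in code. The sketch below is illustrative only: the `Plan` fields, the 1,000-tokens-per-credit conversion, and the prices are hypothetical assumptions, not any vendor's actual pricing or API.

```python
from dataclasses import dataclass


@dataclass
class Plan:
    base_price: float       # flat monthly fee in USD
    included_credits: int   # credits bundled into the base price
    overage_rate: float     # USD per credit beyond the included pool


def credits_for_request(input_tokens: int, output_tokens: int,
                        tokens_per_credit: int = 1_000) -> int:
    """Convert a request's total tokens into whole credits, rounding up."""
    total_tokens = input_tokens + output_tokens
    return -(-total_tokens // tokens_per_credit)  # ceiling division


def monthly_invoice(plan: Plan, credits_used: int) -> float:
    """Base price plus metered charges for credits above the included pool."""
    overage_credits = max(0, credits_used - plan.included_credits)
    return plan.base_price + overage_credits * plan.overage_rate


pro = Plan(base_price=20.0, included_credits=500, overage_rate=0.05)
print(credits_for_request(input_tokens=1_200, output_tokens=300))
print(monthly_invoice(pro, credits_used=400))  # inside the pool
print(monthly_invoice(pro, credits_used=700))  # 200 credits of overage
```

The light user pays the predictable floor, while the heavy user's bill rises with the cost they generate, which is the re-coupling of revenue and cost described above.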

How does licensing work as a revenue line for AI chatbot apps?

Licensing is the underrated fourth pillar. Licensing means charging a third party — a publisher, a data partner, a brand, an enterprise — for access to your chatbot's output, the users' intent data (with consent), the app's distribution, or a custom version of the product. It generates material revenue on favorable terms because the customer is a business that can absorb five- and six-figure deals without churn risk.

Three licensing patterns are live in 2026:

- Output licensing. A publisher or brand pays to have their content cited, surfaced, or referenced in your chatbot's answers under clearly disclosed terms. Related to sponsored placements but structured as a longer-term content partnership. OpenAI's publisher deals (News Corp, Axel Springer, the Financial Times, etc.) are the category's closest reference, though in those deals the AI company pays the publisher for content access.
- Vertical / enterprise licensing. A company licenses your chatbot engine to power their branded assistant. You get upfront fees plus per-seat or per-query pricing. Common in customer support, legal research, and B2B SaaS.
- Data licensing. Aggregated, anonymized, privacy-compliant signals about what users are asking, trending topics, or category-level intent. Market research firms and media intelligence players are early buyers.

Licensing is not a launch-day revenue line — it requires product maturity, legal infrastructure, and a known customer segment — but for chat apps with a real audience and a distinctive dataset, it often ends up being the single highest-margin revenue stream in the mix.

How does affiliate monetization work inside a chatbot?

Affiliate monetization pays the chatbot app a commission when a user clicks through to a partner site and completes an action — a purchase, a signup, a booking. Inside a chatbot, it usually shows up as product recommendations, comparison answers, or sponsored follow-up suggestions that link to affiliate partner URLs.

Affiliate is the easiest revenue line to turn on (existing programs like Amazon Associates, Skimlinks, Impact) and often the lowest yielding per impression compared to direct ads. It works best as a supplementary layer on top of ads and subscription, not as the sole revenue model. The category where affiliate punches above its weight is commerce-intent chat — shopping assistants, travel planning bots, financial product comparison — where conversion intent is high and affiliate commissions are meaningful (2–15%+ of transaction value).

Key implementation notes:

- Disclosure is non-negotiable. FTC guidance applies. Every affiliate link must be labeled.
- Quality of recommendation matters more than quantity. A single well-matched affiliate suggestion beats five mediocre ones on both revenue and retention.
- Measure against the non-affiliate counterfactual. If your chatbot answered the same question without the affiliate link, would the user still convert? If yes, you're cannibalizing your own organic experience.

Affiliate often pairs naturally with a direct ad network. The ad network handles display inventory; the affiliate layer handles deeper funnel conversion events. Many AI chat apps run both, attributing revenue to whichever model touched the user's path last.
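Last-touch attribution, as described above, can be sketched in a few lines. The `Touch` record, the channel names, and the seven-day lookback window are all hypothetical choices made for this example. Real attribution systems differ in how they define windows and deduplicate events.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Touch:
    channel: str      # e.g. "ad" or "affiliate"
    timestamp: float  # seconds since epoch


def last_touch_channel(touches: list[Touch], conversion_time: float,
                       window_seconds: float = 7 * 24 * 3600) -> Optional[str]:
    """Return the channel of the most recent touch that happened before the
    conversion and inside the lookback window, or None if there is none."""
    eligible = [
        t for t in touches
        if conversion_time - window_seconds <= t.timestamp <= conversion_time
    ]
    if not eligible:
        return None
    return max(eligible, key=lambda t: t.timestamp).channel


path = [Touch("ad", 100.0), Touch("affiliate", 200.0)]
print(last_touch_channel(path, conversion_time=300.0))
print(last_touch_channel(path, conversion_time=150.0))
```

In the first call both touches precede the conversion, so the later affiliate click gets the credit. In the second, only the ad touch precedes the conversion. Last-touch is easy to implement but says nothing about the counterfactual question raised in the implementation notes, so it is best treated as bookkeeping, not proof of incrementality.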

Which monetization model fits which stage of the app?

Stage matters more than model. A pre-revenue chatbot app with 1,000 daily users is not the same business as a post-PMF app with 500K weekly users. The monetization architecture that fits one is actively wrong for the other. Here's the decision matrix by stage.

| Stage | DAU / WAU | Primary goal | Best-fit model | Why |
|---|---|---|---|---|
| Pre-revenue | <10K | Validate willingness-to-pay | Light paywall experiments, early subscription | Ads have no scale; licensing has no leverage |
| Early growth | 10K–100K WAU | Prove unit economics | Freemium + subscription | Free-tier volume still too thin to sustain ad inventory |
| Scaling | 100K–1M WAU | Cover inference costs | Add ads, introduce usage tiers | Free tier volume now sustains ad inventory |
| Scale | 1M–10M WAU | Maximize revenue per user | Full hybrid: ads + subscription + usage + affiliate + licensing | All models are viable at once; sequencing matters less |
| Mature | 10M+ WAU | Defend margin | Expand licensing, add enterprise tier | Output and distribution now have premium value |

The pattern: monetization models stack additively as the app scales, not substitutively. An app at 500K WAUs running ads plus subscription plus usage-based will out-revenue the same app running only one. The complexity cost is real — billing infrastructure, product surface trade-offs, support overhead — but the revenue compounds faster than the complexity.

The trap: starting with the hardest model first. Teams that chase enterprise licensing at 10K WAU usually don't have the deal flow to close contracts. Teams that turn on ads at 2K WAU usually don't have the inventory to attract advertisers. Match the model to the stage.

What does the decision matrix look like by user segment?

User segment determines which monetization models are even plausible. A companion chatbot and an enterprise research assistant are both AI chat apps but have almost nothing in common on the revenue side. Here's the segment-specific view.

| Segment | Example products | Primary revenue | Secondary | Avoid |
|---|---|---|---|---|
| Consumer / companion | Replika, Character.AI | Freemium + ads | Affiliate, IAP | Heavy usage-based (confusing to casual users) |
| Consumer / utility | ChatGPT, Copilot, Gemini | Ads + subscription | Licensing, affiliate | Seat-based pricing |
| Prosumer / creator | Claude Pro, Perplexity Pro | Subscription (hard paywall) | Usage-based overage | Low-end ads (hurts premium positioning) |
| Developer / API wrapper | Cursor, Replit Agent | Usage-based (tokens/credits) | Subscription base | Pure ad-supported (developer trust tanks) |
| B2B / enterprise | Harvey, Glean, vertical SaaS | Seat-based subscription | Enterprise licensing | Ads (kills enterprise deals) |
| Shopping / commerce | Vertical shopping agents | Affiliate + ads | Subscription | Pure subscription (volume not there) |

The practical implication: pick the primary model from the segment row, then layer secondary models as the stage progresses. Don't invert — an enterprise chatbot running ads is an enterprise chatbot without enterprise customers.

What are the real numbers on ChatGPT's monetization stack?

ChatGPT is the only AI chatbot app at hyperscale in 2026, so its monetization stack is the single best reference point for what the numbers look like at scale. OpenAI's annualized revenue crossed $25B by end of February 2026 per Sacra and Business of Apps. That breaks down across four main buckets (consumer subscriptions, API, enterprise/team, and the new ad layer) plus a smaller line for licensing and partnerships.

Approximate shape of the 2026 mix (directional — OpenAI doesn't publish audited breakdowns):

| Revenue line | Approx 2026 annualized | Notes |
|---|---|---|
| Consumer subscriptions (Plus + Pro + Go) | ~$10–12B | ~50M total subscribers; $17B+ projected by OpenAI for 2026 consumer total |
| API | ~$5–7B | Developers + businesses building on GPT models |
| Enterprise + Team | ~$3–5B | Custom / seat-based |
| Advertising | ~$2.5B (projected) | $100M annualized six weeks after launch |
| Other (licensing, partnerships) | Low billions | OEM deals, Apple integration, publisher relationships |

The lesson for an AI chatbot app builder: even at the category's apex, no single revenue line carries the full business. Consumer subs are the biggest today, but ads are the fastest-growing, API is the highest-margin, and enterprise is the stickiest. The monetization stack is what scales. Single-model businesses get outcompeted by multi-model businesses at the same scale.

What tools and infrastructure are required to run each model?

Different models have different infrastructure dependencies. The cost of that infrastructure — build vs buy, time to first revenue, team skills required — often determines which model a small team can actually execute. Here's the build-vs-buy breakdown.

Subscription

Build time: 1–2 weeks with Stripe or Paddle. Buy infrastructure: RevenueCat, Paddle, Stripe Billing, Recurly. Ongoing cost: 2–5% of revenue. Engineering lift: low. This is the easiest model to turn on.

Advertising

Build time: 3–6 months to build your own demand side (not recommended). Buy infrastructure: AI-native ad networks (examples include Thrad's AI ad network for AI app publishers) that handle intent classification, brand safety, demand aggregation, creative QA, and settlement. Ongoing cost: typical revenue share 30–50%. Engineering lift: low on the buy path (SDK / API integration), extreme on the build path. A look at the live AI-assistant ad gallery shows what shipped creative looks like and why format variety matters for inventory value.

Usage-based

Build time: 4–8 weeks for a real metering + billing system. Buy infrastructure: Metronome (now Stripe), Orb, Flexprice, Vayu. Ongoing cost: per-event or flat platform fee. Engineering lift: moderate — usage metering touches every part of the product.

Licensing

Build time: months to years depending on deal complexity. Buy infrastructure: enterprise contract tooling (DocuSign, Ironclad), legal review, custom integrations. Engineering lift: bespoke per deal. Cost: legal + sales overhead.

Affiliate

Build time: days. Buy infrastructure: affiliate networks (Amazon Associates, Impact, Skimlinks). Engineering lift: minimal — link-routing and attribution.

The sequencing implication: turn on subscription and affiliate early (cheap), add ads once WAU supports it (buy infra, don't build), layer usage-based as power-user monetization matures, pursue licensing as a 2–3 year bet.

What monetization mistakes kill AI chatbot apps?

Five mistakes kill AI chatbot app economics in 2026. Each is recoverable early, painful to fix late.

- Free tier with no cost cap. Letting free users consume unlimited inference while paying $0 is how pre-revenue AI apps burn through runway. Solution: cap free usage (messages/day, tokens/session, or total monthly), or convert free to ad-supported so every free session generates some revenue.
- Subscription-only at consumer scale. A $10/mo subscription converting 2% of free users leaves 98% of usage unmonetized. Solution: freemium plus ads. That's the whole point of this article.
- Copying ChatGPT's price point without ChatGPT's model quality. $20/mo is a price anchored in a specific product. Your app's willingness-to-pay is whatever your users will pay for your specific value. Test it.
- Hardcoding a single billing vendor. Vendor lock-in is painful. Abstract billing early so moving from Stripe to Paddle to Metronome is a swap, not a rewrite.
- Ignoring licensing until late. B2B licensing deals have long sales cycles. Start conversations early even if you don't close deals for 12 months. The relationship-building is the work.
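One way to keep the billing vendor swappable is to have product code depend on a small interface instead of a vendor SDK. The sketch below is an assumption-heavy illustration: `BillingProvider`, its two methods, and the in-memory adapter are hypothetical names invented here, and no real vendor API is shown.

```python
from typing import Protocol


class BillingProvider(Protocol):
    """Hypothetical interface: the only billing surface product code may call."""

    def create_subscription(self, user_id: str, plan_id: str) -> str: ...

    def cancel_subscription(self, subscription_id: str) -> None: ...


class InMemoryBilling:
    """Stand-in adapter for local tests; a real adapter would wrap one vendor's SDK."""

    def __init__(self) -> None:
        self.active: dict[str, tuple[str, str]] = {}
        self._next_id = 1

    def create_subscription(self, user_id: str, plan_id: str) -> str:
        subscription_id = f"sub_{self._next_id}"
        self._next_id += 1
        self.active[subscription_id] = (user_id, plan_id)
        return subscription_id

    def cancel_subscription(self, subscription_id: str) -> None:
        self.active.pop(subscription_id, None)


def upgrade_user(billing: BillingProvider, user_id: str, plan_id: str) -> str:
    """Product code takes the interface as a parameter, never a concrete vendor."""
    return billing.create_subscription(user_id, plan_id)


billing = InMemoryBilling()
sub_id = upgrade_user(billing, "user_1", "pro_monthly")
print(sub_id, billing.active[sub_id])
```

Switching vendors then means writing one new adapter class that satisfies the same interface, while `upgrade_user` and the rest of the product stay untouched.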

Common misconceptions

- "Ads will tank retention." They will if you do it wrong. Well-placed contextual ads on commercial-intent prompts have shown neutral-to-positive retention impact in pilots because the ad is itself useful to the user. Poor placement destroys retention; good placement doesn't.
- "We'll figure monetization out after PMF." Every week of unmonetized scale burns runway. PMF and monetization are the same problem: the product works if the revenue model works.
- "Subscription is enough if we charge more." Doubling a $10 tier to $20 doesn't double revenue if the market caps willingness-to-pay below $20. It just cuts conversion.
- "We'll build our own ad stack." Ad infrastructure is a many-years-many-engineers investment. Use a network. Build the product.

What comes next for AI chatbot app monetization?

Four trends to track through 2027:

- Ad-supported free tiers become table stakes. Every serious consumer AI chatbot will run ads by end of 2027. The ones that don't will either have licensed enterprise backing or be subsidized research projects.
- Conversational commerce matures. Agentic checkout inside chat (buying a product without leaving the conversation) becomes a real revenue channel with meaningful attribution. ChatGPT's Instant Checkout and Perplexity's shopping integrations are the first wave.
- Outcome-based pricing pilots expand. Charging for a completed task (a booked flight, a drafted contract, a closed ticket) replaces charging per token in specific verticals. Agentic products push this first.
- Cross-app ad networks consolidate. Just as mobile app advertising consolidated on a handful of ad networks in 2012–2015, AI-app ad networks consolidate in 2026–2028 into a small number of players with cross-app inventory, unified measurement, and standardized formats.

How to get started monetizing an AI chatbot app

Follow the sequence by stage, not by model preference. If you're pre-revenue, validate willingness-to-pay with a light paywall experiment before building anything else. If you're early growth, layer in a real subscription tier with clear premium unlock. If you're scaling, turn on ads — that's where the revenue is.

A concrete 90-day monetization plan for a consumer AI chatbot app at 100K+ WAU:

- Week 1–2. Cap free usage. Pick a number: 20 messages/day, 50K tokens/month, something. Stop bleeding.
- Week 3–4. Ship a subscription tier. Keep it simple: one price, ad-free, higher usage cap. Target 3–5% conversion.
- Week 5–8. Integrate an AI-native ad network on the free tier. Start with a single unit type (sponsored suggestion, contextual product card) and measure retention impact.
- Week 9–12. Layer affiliate on top for commerce-intent prompts. Model usage-based overage for the subscription tier if power-user behavior warrants it.
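The first step of the plan, capping free usage, can start as something this small. The sketch below is a minimal in-memory version that assumes a single process and a per-day message limit. The class name and the default of 20 messages are illustrative, and a production version would need a shared store so the count survives restarts and multiple servers.

```python
from collections import defaultdict
from datetime import date


class DailyMessageCap:
    """Counts free-tier messages per user per calendar day (in-memory only)."""

    def __init__(self, limit_per_day: int = 20) -> None:
        self.limit = limit_per_day
        self._counts: dict[tuple[str, date], int] = defaultdict(int)

    def try_consume(self, user_id: str, today: date) -> bool:
        """Record one message and return True, or return False if the user
        has already used today's allowance."""
        key = (user_id, today)
        if self._counts[key] >= self.limit:
            return False
        self._counts[key] += 1
        return True


cap = DailyMessageCap(limit_per_day=2)
day_one = date(2026, 1, 5)
print([cap.try_consume("user_1", day_one) for _ in range(3)])
print(cap.try_consume("user_1", date(2026, 1, 6)))
```

The third message on the first day is refused, and the allowance resets the next day. The refusal is the natural place to show an upgrade prompt or switch the session to an ad-supported mode.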

Thrad handles the supply-side ad infrastructure for AI chat and LLM app publishers — contextual intent classification, brand safety, demand aggregation across advertisers, and transparent revenue share — so the ad line on the plan above becomes an integration, not a build project. If you're an AI app builder with WAUs and no ad revenue, that's the gap worth closing first.


Citations:

Business of Apps, "ChatGPT Revenue and Usage Statistics 2026." https://www.businessofapps.com/data/chatgpt-statistics/

RevenueCat, "State of Subscription Apps 2026." https://www.revenuecat.com/state-of-subscription-apps/

RevenueCat, "Why hybrid monetization is the default model for subscription apps in 2026." https://www.revenuecat.com/blog/growth/ai-hybrid-monetization/

Metronome, "2026 Trends From Cataloging 50+ AI Pricing Models." https://metronome.com/blog/2026-trends-from-cataloging-50-ai-pricing-models

PPC Land, "AI apps earn 41% more per user but churn 30% faster, RevenueCat finds." https://ppc.land/ai-apps-earn-41-more-per-user-but-churn-30-faster-revenuecat-finds/

Humai, "ChatGPT Is Running Ads. It Hit $100 Million in Six Weeks." https://www.humai.blog/chatgpt-is-running-ads-it-hit-100-million-in-six-weeks-openai-wants-100-billion-by-2030/

Sacra, "OpenAI revenue, valuation and funding." https://sacra.com/c/openai/

Business of Apps, "Will Generative AI apps remain a revenue powerhouse in 2026?" https://www.businessofapps.com/insights/will-generative-ai-apps-remain-a-revenue-powerhouse-in-2026/

Sensor Tower, "2026 State of Mobile: AI Moves Mobile into Its Next Phase." https://sensortower.com/blog/state-of-mobile-2026
